What is an MCP Gateway? Key Features and Benefits

An MCP gateway centralizes tool discovery, routing, governance, and execution for AI agents. Learn how Bifrost's MCP gateway powers production-grade agent infrastructure.

As AI agents move from prototypes to production, teams quickly discover that connecting models to external tools is harder than it looks. An MCP gateway solves this by acting as a single control plane between language models and every external system they need to call. Bifrost, the open-source AI gateway by Maxim AI, includes a production-grade MCP gateway that gives engineering teams centralized tool discovery, governance, security, and execution across all of their connected MCP servers, with 11 microseconds of overhead at 5,000 requests per second.

This post covers what an MCP gateway is, why it matters for production agent workflows, the key features that distinguish a true gateway from a basic MCP client, and how Bifrost approaches MCP at scale.

What is an MCP Gateway?

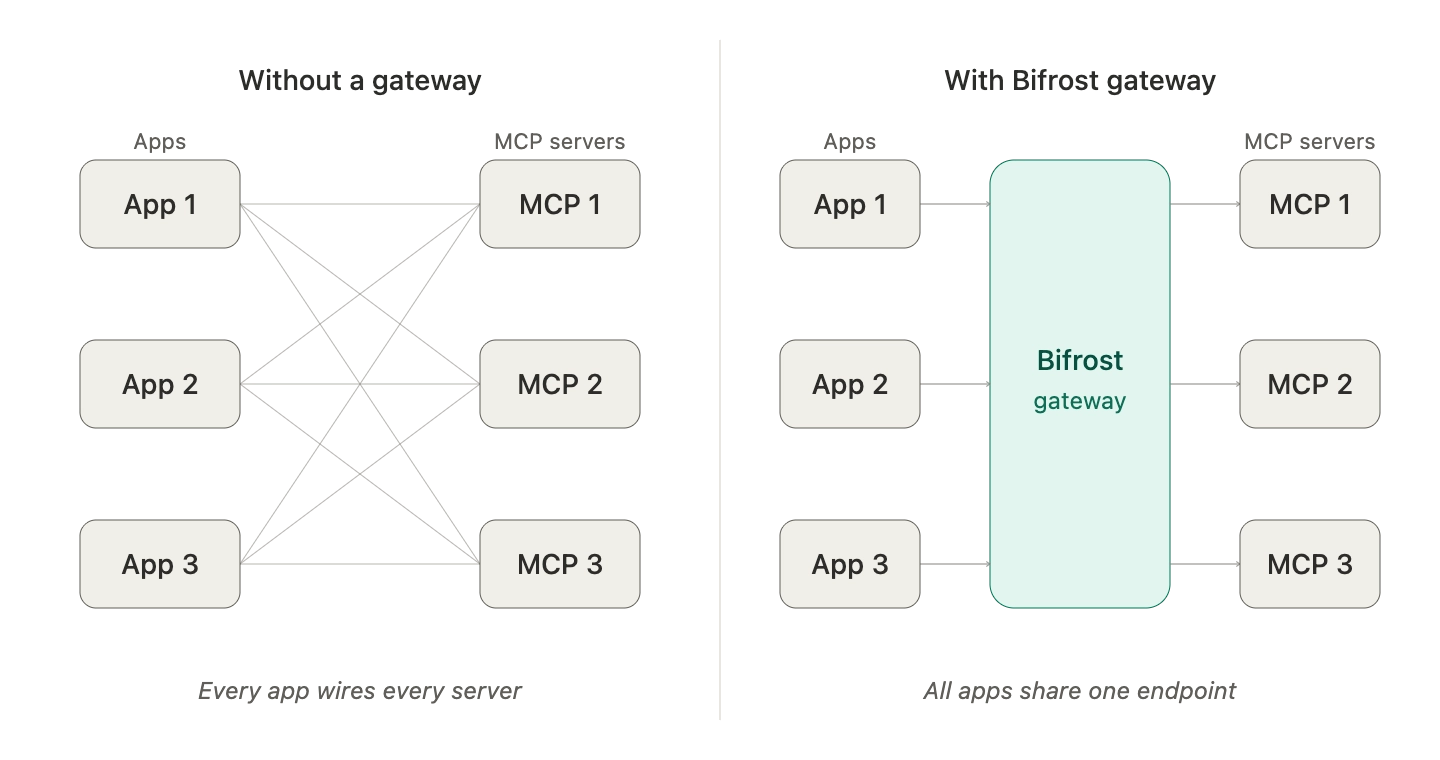

An MCP gateway is a centralized infrastructure layer that connects AI models to external tools through the Model Context Protocol, handling tool discovery, routing, authentication, governance, and execution from a single endpoint. It sits between LLM clients (chat apps, coding agents, autonomous workflows) and the growing ecosystem of MCP servers that expose filesystems, databases, APIs, and custom business logic to AI models.

The Model Context Protocol itself is an open standard introduced by Anthropic in late 2024 that standardizes how AI applications connect to external data sources and tools. As the official MCP specification describes, MCP enables seamless integration between LLM applications and external systems through a client-server architecture. Adoption has been rapid: in Anthropic's own words, MCP has become the de facto standard for connecting agents to tools and data, with thousands of community-built servers and SDKs across all major programming languages.

A gateway extends MCP from a one-to-one protocol into multi-tenant production infrastructure.

Why MCP Gateways Matter for AI Teams

Without a gateway, every MCP integration is direct and uncoordinated. Each agent or assistant configures its own MCP servers independently, which leads to predictable problems at scale:

- Configuration sprawl: every coding agent, app, or workflow maintains its own list of MCP servers, credentials, and approval rules.

- No unified governance: there is no single place to enforce who can use which tool, what budgets apply, or what audit data is captured.

- Token bloat: when many MCP servers are connected directly, full tool definitions are loaded into every prompt, consuming significant context and increasing latency. Anthropic's engineering team has noted that loading all tool definitions upfront slows down agents and increases costs as the number of connected tools grows.

- Fragmented security: OAuth flows, secret rotation, and tool filtering must be implemented per integration rather than centrally.

- Limited observability: tool calls happen across disconnected processes with no consolidated trace of what executed, when, or why.

An MCP gateway consolidates all of this. Tools are registered once and exposed through a single gateway URL. Governance, auth, filtering, observability, and execution policies live at the gateway layer, not in every agent. This is the difference between an experimental MCP setup and a production agent platform.

How Bifrost Approaches the MCP Gateway

Bifrost acts as both an MCP client and an MCP server. It connects to external MCP-compatible tool servers (filesystem, databases, search, internal APIs) and exposes a single endpoint that any MCP client can connect to, including Claude Desktop, Claude Code, Codex CLI, Gemini CLI, and Cursor.

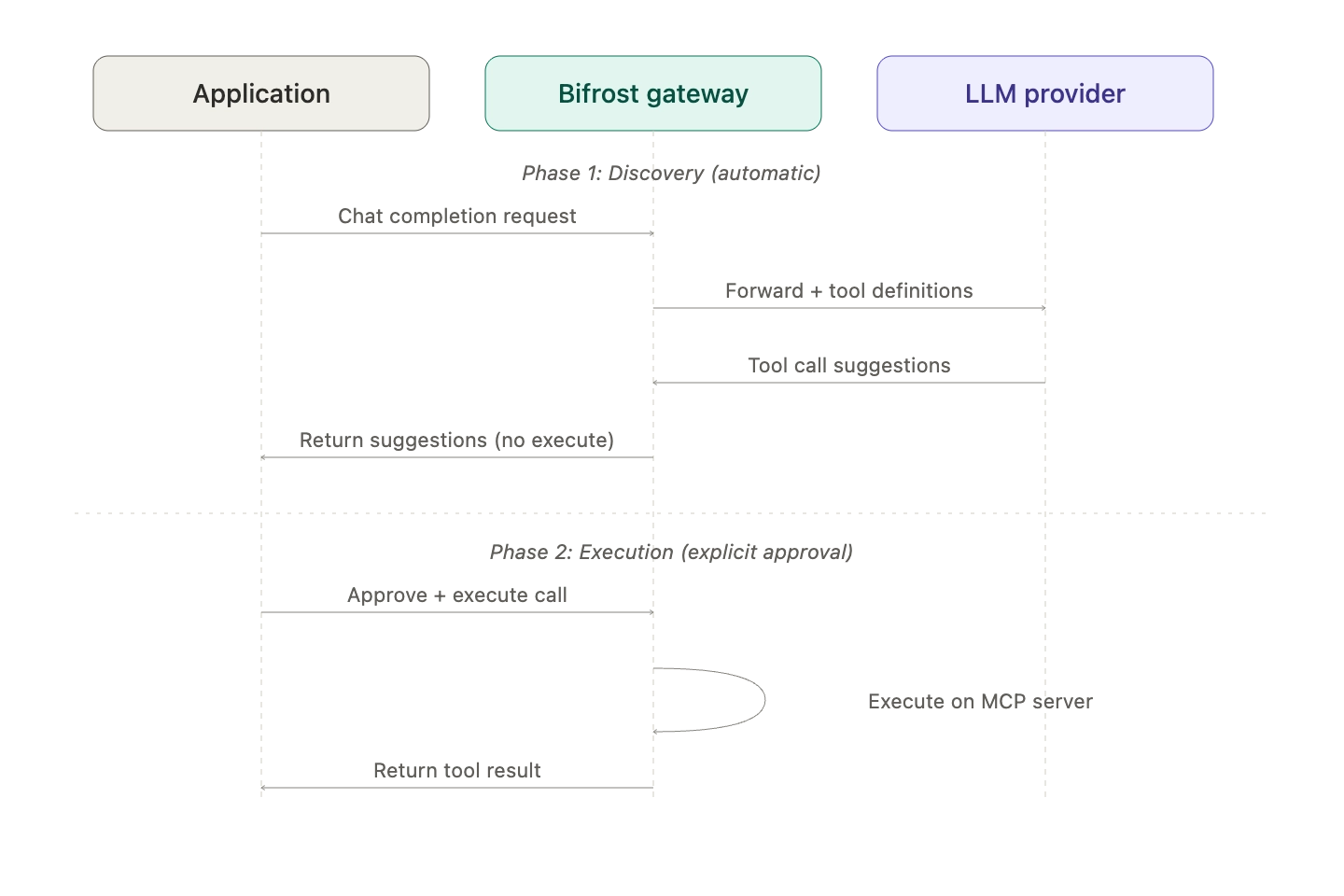
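To make the "client on one side, server on the other" idea concrete, here is a minimal in-memory sketch of a gateway catalog that merges tools from several upstream servers and routes calls by name. Everything in it is hypothetical: `UpstreamServer`, `GatewayCatalog`, and the `server.tool` naming scheme are illustrative assumptions, not Bifrost's actual types or conventions.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# A tool is modeled as a plain callable taking keyword arguments.
Tool = Callable[..., object]


@dataclass
class UpstreamServer:
    """Stand-in for one connected MCP server (hypothetical, in-memory)."""
    name: str
    tools: Dict[str, Tool] = field(default_factory=dict)


class GatewayCatalog:
    """Merges tools from many upstream servers behind one lookup table."""

    def __init__(self) -> None:
        self._routes: Dict[str, Tool] = {}

    def register(self, server: UpstreamServer) -> None:
        # ASSUMPTION: prefix each tool with its server name to avoid collisions.
        for tool_name, fn in server.tools.items():
            self._routes[f"{server.name}.{tool_name}"] = fn

    def list_tools(self) -> List[str]:
        # What a connecting client would discover through the single endpoint.
        return sorted(self._routes)

    def call(self, qualified_name: str, **kwargs: object) -> object:
        if qualified_name not in self._routes:
            raise KeyError(f"unknown tool: {qualified_name}")
        return self._routes[qualified_name](**kwargs)


catalog = GatewayCatalog()
catalog.register(UpstreamServer("fs", {"read": lambda path: f"contents of {path}"}))
catalog.register(UpstreamServer("search", {"query": lambda q: [f"result for {q}"]}))

print(catalog.list_tools())
print(catalog.call("fs.read", path="notes.txt"))
```

A client that only knows the catalog sees `fs.read` and `search.query` as one tool list, which is the property the single gateway endpoint is meant to provide.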

The model interaction stays clean: an application sends a standard chat completion request, Bifrost injects the discovered MCP tools into the request, and the LLM returns tool call suggestions. By default, those suggestions are not auto-executed. The application explicitly approves and triggers execution through a separate API call. This stateless, explicit pattern preserves human oversight on potentially dangerous operations while keeping the orchestration logic predictable.

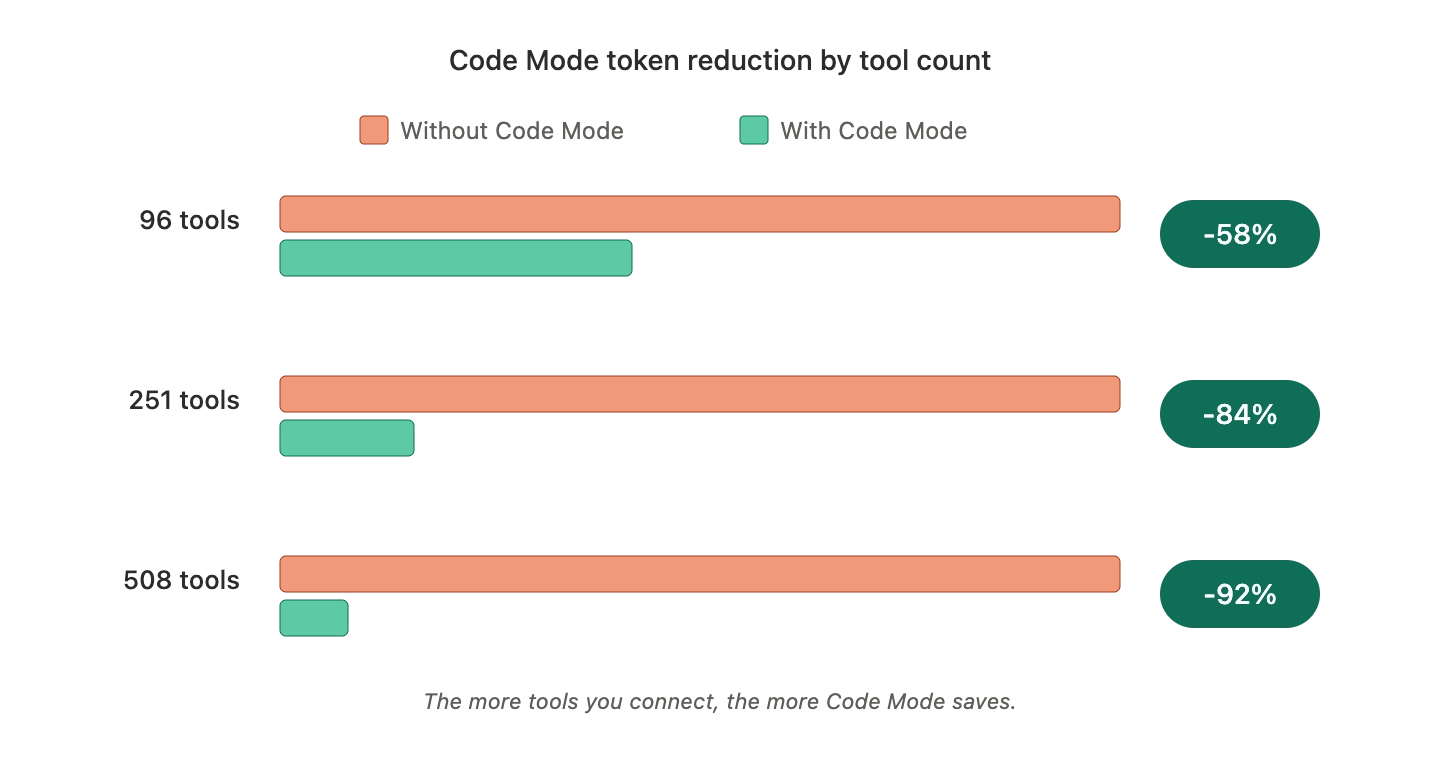
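The suggest-then-approve flow described above can be sketched without any network calls. The snippet below is a simulation under stated assumptions: `suggest_tool_calls` stands in for the chat completion step, `execute_tool` stands in for the separate execution call, and the tool names and approval rule are invented for illustration. None of these are Bifrost API names.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class ToolCall:
    tool: str
    arguments: dict


# Hypothetical tool implementations standing in for MCP servers.
TOOLS: Dict[str, Callable[..., str]] = {
    "read_file": lambda path: f"contents of {path}",
    "delete_file": lambda path: f"deleted {path}",
}


def suggest_tool_calls(prompt: str) -> List[ToolCall]:
    """Stand-in for the chat completion step: returns suggestions, runs nothing."""
    return [
        ToolCall("read_file", {"path": "report.txt"}),
        ToolCall("delete_file", {"path": "report.txt"}),
    ]


def execute_tool(call: ToolCall) -> str:
    """Stand-in for the separate execution call the application makes."""
    return TOOLS[call.tool](**call.arguments)


def approve(call: ToolCall) -> bool:
    """Application-side policy: only the non-destructive tool passes here."""
    return call.tool in {"read_file"}


results: List[str] = []
skipped: List[str] = []
for call in suggest_tool_calls("Summarize report.txt, then clean up"):
    if approve(call):
        results.append(execute_tool(call))
    else:
        skipped.append(call.tool)

print(results)
print(skipped)
```

The point of the pattern is that the destructive `delete_file` suggestion never runs unless the application decides it should; the model's output alone has no side effects.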

For teams that want autonomous behavior, Bifrost offers an agent mode that allows configurable auto-execution for specific tools. For teams running large tool ecosystems, Bifrost offers Code Mode, which lets the model write Python that orchestrates many tools inside a sandbox rather than receiving full tool definitions in the prompt. Code Mode reduces token usage by more than 50% and execution latency by 40 to 50% compared to classic MCP tool calling.
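As a rough illustration of the Code Mode idea, the sketch below runs a short model-style script against a namespace that contains only the tools it is allowed to call. The tool functions, the script, and `run_script` are all hypothetical, and restricting builtins via `exec` is used here purely for illustration: it is not a security boundary and should not be read as how Bifrost's sandbox works.

```python
from typing import Callable, Dict


# Hypothetical tools; in a real deployment these would proxy to MCP servers.
def list_orders(customer: str) -> list:
    return [{"id": 1, "total": 40.0}, {"id": 2, "total": 60.0}]


def send_summary(customer: str, text: str) -> str:
    return f"sent to {customer}: {text}"


TOOLS: Dict[str, Callable] = {"list_orders": list_orders, "send_summary": send_summary}

# A script of the kind a model might write. Intermediate data (the order list)
# stays inside the script instead of passing back through the prompt.
MODEL_SCRIPT = """
orders = list_orders("acme")
total = sum(order["total"] for order in orders)
result = send_summary("acme", f"{len(orders)} orders, total {total:.2f}")
"""


def run_script(script: str, tools: Dict[str, Callable]) -> object:
    # NOTE: a trimmed builtins table is illustrative only, not real isolation.
    allowed_builtins = {"sum": sum, "len": len}
    namespace = {"__builtins__": allowed_builtins, **tools}
    exec(script, namespace)
    # Only the final `result` value would be returned to the model.
    return namespace.get("result")


print(run_script(MODEL_SCRIPT, TOOLS))
```

Two tool calls and an aggregation happen in one step here, and only the final string goes back to the model, which is where the token savings in this style of orchestration would come from.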

Key Features of a Production-Grade MCP Gateway

A production MCP gateway has to do more than relay tool calls. The features below define what separates a real gateway from a thin protocol shim, and each is part of Bifrost's MCP gateway.

- Single gateway endpoint: every connected MCP server is exposed through one URL. Clients connect once and discover the entire tool ecosystem automatically.

- Multi-transport connectivity: support for STDIO, HTTP, and SSE-based MCP connections with automatic retry and exponential backoff for transient failures.

- Centralized OAuth and auth: OAuth 2.0 authentication with automatic token refresh, PKCE, and per-end-user upstream credentials.

- Tool filtering: granular control over which MCP tools are visible to which virtual key, agent, or request.

- Tool hosting: register custom tools and expose them via MCP without standing up separate servers.

- Explicit execution by default: tool calls from LLMs are treated as suggestions until the application explicitly approves them, preserving auditability.

- Agent mode: configurable auto-execution for trusted, low-risk tools.

- Code Mode: model-written Python orchestration for token-efficient multi-tool workflows.

- Audit logging: every tool suggestion, approval, and execution is captured for compliance and debugging.

- Governance integration: tool access is governed by the same virtual keys that control model access, budgets, and rate limits.
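The retry behavior mentioned under multi-transport connectivity follows a well-known pattern. Here is a generic sketch of retry with exponential backoff; the `TransientError` class, the default attempt count, and the base delay are assumptions chosen for the example, not Bifrost's settings.

```python
import time
from typing import Callable, List, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, dropped connections)."""


def call_with_backoff(
    operation: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.1,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry `operation` on TransientError, doubling the delay after each failure."""
    for attempt in range(max_attempts):
        try:
            return operation()
        except TransientError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            sleep(base_delay * (2 ** attempt))
    # Only reached if max_attempts < 1.
    raise RuntimeError("max_attempts must be at least 1")


# Usage: a tool call that fails twice, then succeeds. Delays are recorded
# instead of slept so the example runs instantly.
attempts = {"count": 0}
delays: List[float] = []


def flaky_tool_call() -> str:
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise TransientError("connection reset")
    return "ok"


result = call_with_backoff(flaky_tool_call, sleep=delays.append)
print(result, delays)
```

Production implementations often add random jitter and a maximum delay on top of this; both are left out here to keep the core doubling logic visible.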

Key Benefits of Using an MCP Gateway

Centralizing MCP behind a gateway delivers measurable improvements in cost, reliability, security, and developer velocity.

- Lower token costs at scale: Bifrost's Code Mode benchmarks show 58% token reduction at 96 tools, 84% at 251 tools, and 92% at 508 tools versus passing every tool definition to the model directly. For details on the architecture and benchmarks, see the Bifrost MCP gateway deep dive.

- Predictable agent behavior: when orchestration moves from prompt-time tool selection to deterministic code execution, agent workflows become easier to reason about and reproduce.

- Unified governance: virtual keys integrate model access and tool access into a single policy layer, preventing cases where an agent has permission to call a model but no enforced limits on what tools it can run.

- Centralized security posture: OAuth, secret managers (HashiCorp Vault, AWS Secrets Manager, Google Secret Manager, Azure Key Vault), and content guardrails live at the gateway, not in every agent.

- Observability across all tool calls: every MCP execution is captured with metadata, providing a complete audit trail and operational telemetry without per-agent instrumentation work.

- Lower integration cost: instead of wiring MCP servers into every coding agent and assistant, teams point all clients at the gateway URL and update the registry centrally.

- Performance headroom: 11 microseconds of overhead at 5,000 requests per second means the gateway adds negligible latency, even under sustained production load.
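The Code Mode reduction figures in the list above are easier to interpret with a little arithmetic. The sketch below applies the quoted percentages to a hypothetical baseline in which every tool definition costs the same number of tokens. The 200-token figure is an assumption made for illustration, and treating the reductions as applying to tool-definition tokens specifically is a simplifying reading of the benchmark, not a claim about how it was measured.

```python
# Reduction percentages quoted in the text, keyed by number of connected tools.
REDUCTION_PERCENT = {96: 58, 251: 84, 508: 92}

# ASSUMPTION: illustrative average size of one tool definition, in tokens.
TOKENS_PER_TOOL_DEFINITION = 200


def remaining_tokens(tool_count: int, reduction_percent: int) -> int:
    """Tokens left after applying the reduction to a uniform-size baseline."""
    baseline = tool_count * TOKENS_PER_TOOL_DEFINITION
    # Integer arithmetic avoids floating-point rounding noise.
    return baseline * (100 - reduction_percent) // 100


for tools, percent in REDUCTION_PERCENT.items():
    baseline = tools * TOKENS_PER_TOOL_DEFINITION
    print(f"{tools} tools: baseline {baseline}, remaining {remaining_tokens(tools, percent)}")
```

Under these assumptions the remaining cost stays close to 8,000 tokens at all three sizes while the baseline grows more than fivefold. That is what one would expect if per-request cost stops scaling with the number of connected tools.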

Key Considerations for MCP Gateway Implementation

Choosing and deploying an MCP gateway involves trade-offs that engineering teams should evaluate before committing.

- Execution model: decide whether your workflows need explicit approval, autonomous agent mode, or Code Mode. Most production systems use a mix, with sensitive operations gated behind explicit execution and routine read-only tools auto-executed.

- Scope of tool access: tool filtering should map to a clear authorization model. Treat tool access the same way you treat database access: least privilege, scoped per consumer.

- Auth strategy: for tools that act on user data, federated OAuth where each end-user authenticates under their own credentials avoids the operational and security burden of shared service accounts.

- Observability requirements: confirm the gateway integrates with your existing telemetry. Bifrost emits Prometheus metrics, supports OTLP for distributed tracing, and integrates with Grafana, New Relic, Honeycomb, and Datadog.

- Deployment topology: regulated industries often need in-VPC deployments and clustering for high availability. Confirm the gateway supports both.

- Token efficiency at scale: if you expect to connect dozens or hundreds of MCP servers, plan for Code Mode or an equivalent code-execution pattern. Loading every tool definition into every prompt is not viable past a few dozen tools.
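The execution-model and least-privilege considerations above can be combined into a single per-consumer policy. The following is a minimal sketch of that idea; `VirtualKeyPolicy`, its fields, and the tool names are hypothetical and do not reflect Bifrost's configuration schema.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet, List


class ExecutionMode(Enum):
    EXPLICIT = "explicit"  # application must approve each call
    AUTO = "auto"          # agent mode: runs without approval


@dataclass(frozen=True)
class VirtualKeyPolicy:
    """Hypothetical per-consumer policy; field names are illustrative."""
    name: str
    allowed_tools: FrozenSet[str]
    auto_execute: FrozenSet[str] = frozenset()

    def visible_tools(self, catalog: List[str]) -> List[str]:
        # Least privilege: anything not explicitly allowed is hidden.
        return [tool for tool in catalog if tool in self.allowed_tools]

    def mode_for(self, tool: str) -> ExecutionMode:
        if tool not in self.allowed_tools:
            raise PermissionError(f"{self.name} may not use {tool}")
        if tool in self.auto_execute:
            return ExecutionMode.AUTO
        return ExecutionMode.EXPLICIT


CATALOG = ["db.read_rows", "db.drop_table", "fs.read", "fs.write"]

support_bot = VirtualKeyPolicy(
    name="support-bot",
    allowed_tools=frozenset({"db.read_rows", "fs.read", "fs.write"}),
    auto_execute=frozenset({"db.read_rows", "fs.read"}),  # read-only tools
)

print(support_bot.visible_tools(CATALOG))
print(support_bot.mode_for("fs.write"))
```

Here the read-only tools run automatically, the write tool still requires explicit approval, and `db.drop_table` is not visible to this consumer at all.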

How MCP Gateways Fit into Broader AI Infrastructure

A gateway is not a standalone product. It is one layer of a complete agent infrastructure stack. In Bifrost's case, MCP runs alongside automatic failover across 20+ LLM providers, semantic caching, rate limits, and built-in observability. The same virtual key that limits how many tokens an agent can spend on a model also controls which MCP tools the agent can invoke. This unified architecture is what makes the gateway useful in production rather than as a demo.

For coding agents specifically, the same gateway endpoint serves Claude Code, Codex CLI, Gemini CLI, and Cursor without per-agent reconfiguration. Connect once, govern everywhere.

Getting Started with Bifrost's MCP Gateway

Bifrost's MCP gateway gives engineering teams a single, governed control plane for AI agent tool access, with the performance characteristics required for production workloads. Teams can install Bifrost in 30 seconds with npx -y @maximhq/bifrost, connect their MCP servers, and start routing tool calls through a single endpoint with full audit logging, governance, and Code Mode efficiency.

To see how Bifrost's MCP gateway can simplify your AI agent infrastructure, book a demo with the Bifrost team.