How to Reduce MCP Tool-Call Token Costs 50%+ with Code Mode

MCP tool-call token costs explode as agents add servers. Code Mode cuts token usage 50%+ by letting models write code instead of calling tools directly.

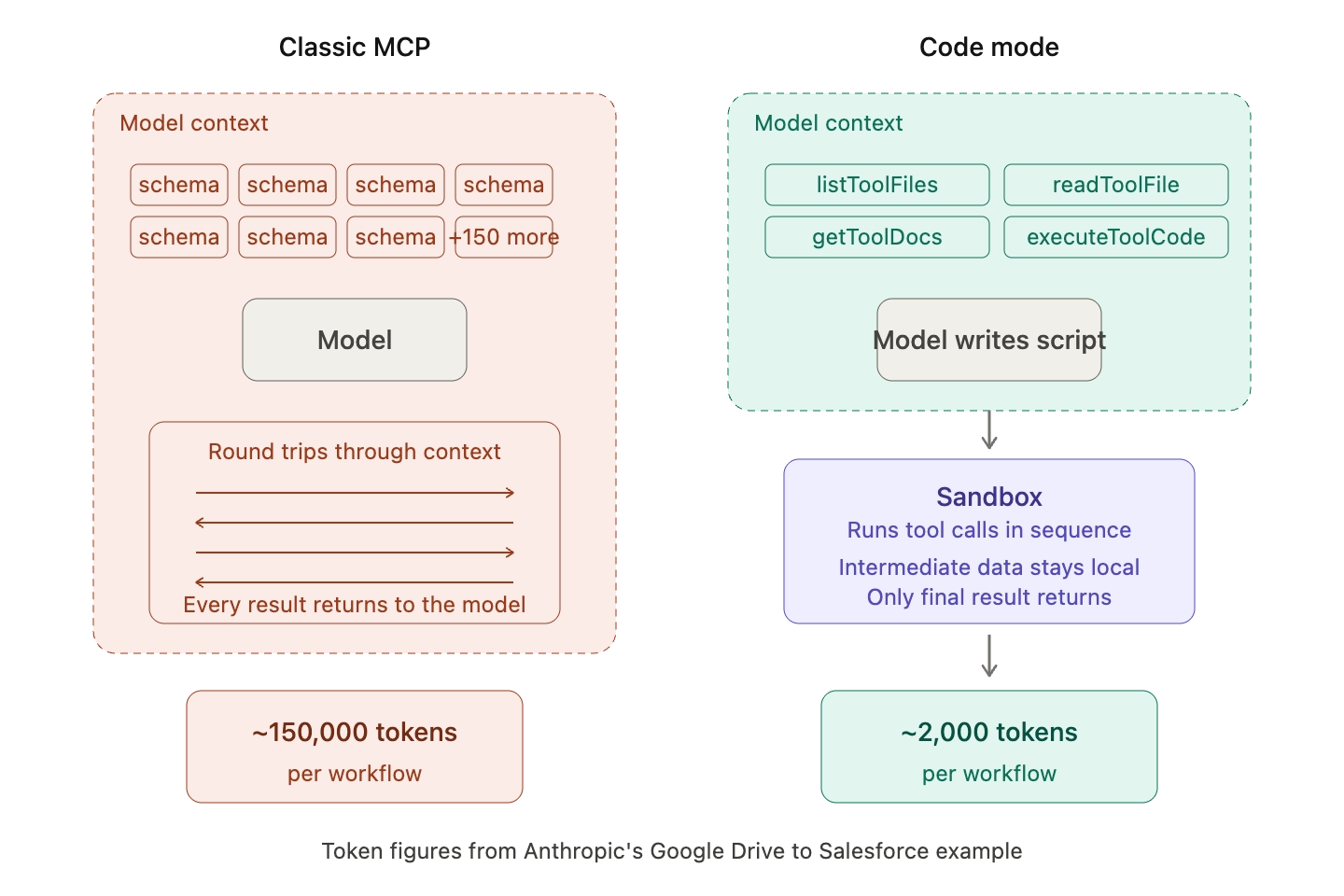

MCP tool-call token costs have become one of the largest, least-visible line items in production AI spend. Every time an agent connects to a Model Context Protocol (MCP) server, the client loads the full list of tool definitions into the model's context window before the first user prompt is even processed. With five servers exposing thirty tools each, that is 150 tool schemas the model parses on every turn, whether it uses them or not. Code Mode, a pattern first documented by Anthropic and Cloudflare and now built natively into Bifrost's MCP gateway, eliminates this overhead by letting the model write a short script that orchestrates tools in a sandbox instead of calling them one at a time through the context window.

Why MCP Tool-Call Token Costs Grow So Quickly

Most MCP clients follow the same default pattern: load every tool definition from every connected server into the prompt, then let the model pick which tool to call. That pattern breaks at scale for two reasons documented by Anthropic's engineering team in their post on code execution with MCP.

First, tool definitions crowd the context window. Each tool carries a description, a parameter schema, and return-type metadata, and those tokens are paid on every request. When an agent is connected to thousands of tools, it processes hundreds of thousands of tokens before reading the user's message.

Second, intermediate tool results travel through the model between steps. Anthropic illustrates this with a Google Drive to Salesforce workflow: the model pulls a meeting transcript from Drive, then rewrites it into a Salesforce update. Both times, the full transcript flows through context. For a two-hour sales meeting, that alone can add 50,000 tokens to a single run.
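A back-of-the-envelope sketch makes both effects concrete. The five-server, thirty-tool setup comes from the introduction; the 200-token average schema size and the 10,000-token payload are assumptions chosen for illustration, not measured values.

```python
# Assumption: an average tool schema costs about 200 tokens. Real schemas vary widely.
ASSUMED_TOKENS_PER_SCHEMA = 200


def schema_overhead(servers: int, tools_per_server: int, turns: int,
                    tokens_per_schema: int = ASSUMED_TOKENS_PER_SCHEMA) -> int:
    """Input tokens spent on tool definitions alone when the catalog is resent every turn."""
    return servers * tools_per_server * tokens_per_schema * turns


def passthrough_overhead(payload_tokens: int, passes: int) -> int:
    """Tokens spent moving one intermediate result through the model `passes` times."""
    return payload_tokens * passes


print(schema_overhead(5, 30, turns=1))         # 30000
print(schema_overhead(5, 30, turns=8))         # 240000
print(passthrough_overhead(10_000, passes=2))  # 20000
```

Under these assumptions the tool catalog costs more than the payload itself after a single turn, and the gap grows with every additional turn.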

The usual advice to "trim your tool list" is not a fix. It is a tradeoff where teams sacrifice capability to control cost. Code Mode solves the underlying problem instead.

What Is Code Mode in an MCP Gateway

Code Mode is an MCP execution pattern in which the model writes a short script, typically in TypeScript, Python, or a constrained variant such as Starlark, and the gateway runs that script in a sandbox to orchestrate all tool calls. The model never loads the full tool catalog into its context window and never sees intermediate results unless it explicitly asks for them.

The insight behind Code Mode is counterintuitive but well-supported: LLMs are better at writing code to call tools than at calling tools directly through JSON function-calling syntax. Anthropic and Cloudflare reached the same conclusion independently and published their findings within weeks of each other. Cloudflare built a variant that exposes 2,500+ API endpoints to agents using just two tools and roughly 1,000 tokens. Anthropic demonstrated a 98.7% token reduction, from 150,000 tokens down to 2,000, on the Drive-to-Salesforce example.
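The 98.7% figure follows directly from the two token counts quoted above. A quick check, using a helper name (`percent_reduction`) that is ours rather than anything from the cited posts:

```python
def percent_reduction(before: int, after: int) -> float:
    """Percentage drop from `before` to `after`, rounded to one decimal place."""
    return round(100 * (before - after) / before, 1)


print(percent_reduction(150_000, 2_000))  # 98.7
```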

Bifrost's Code Mode builds on this pattern with two implementation choices suited to production gateways. It uses Python stubs rather than TypeScript, on the reasoning that Python is among the languages best represented in LLM training data, and it adds a dedicated documentation meta-tool so agents can retrieve deeper interface details only when needed.

How Code Mode Reduces MCP Token Costs in Practice

With Code Mode enabled, Bifrost stops injecting every tool definition into the model's context. Instead, it exposes four meta-tools that the model uses to discover, inspect, and run tools on demand:

- listToolFiles: discovers which MCP servers and tools are available

- readToolFile: loads the Python function signatures for a specific server or tool

- getToolDocs: fetches detailed documentation for a tool before it is used

- executeToolCode: runs an orchestration script against live tool bindings in a sandboxed Starlark interpreter

The model navigates this catalog as a virtual filesystem of lightweight stub files. It reads only the interfaces it needs, writes a short script that chains the necessary calls, and the gateway executes everything in sequence without round-tripping intermediate results through the LLM.
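To show the shape of such a script, here is a minimal simulation in plain Python. Everything in it is a hypothetical stand-in: `gdrive_get_document`, `salesforce_update_record`, the IDs, and the in-memory record store are invented for illustration and are not real Bifrost, Google Drive, or Salesforce APIs. The point is only that the large transcript moves between tools inside the sandbox while a small summary is all that returns to the model.

```python
# Hypothetical tool bindings; a real sandbox would inject the actual ones.
def gdrive_get_document(document_id: str) -> str:
    """Pretend to fetch a long meeting transcript."""
    return "Speaker A: pricing discussion. " * 2_000


SALESFORCE_RECORDS: dict[str, dict[str, str]] = {}


def salesforce_update_record(record_id: str, notes: str) -> dict:
    """Pretend to write notes onto a CRM record."""
    SALESFORCE_RECORDS[record_id] = {"notes": notes}
    return {"id": record_id, "updated": True}


def orchestrate() -> dict:
    """The kind of short script a model might submit through executeToolCode."""
    transcript = gdrive_get_document("doc-123")  # stays inside the sandbox
    result = salesforce_update_record("lead-456", transcript)
    # Only this small summary would be returned to the model's context.
    return {"updated": result["updated"], "chars_moved": len(transcript)}


summary = orchestrate()
print(summary)
```

The returned dictionary is a few dozen characters long, while the transcript it describes is tens of thousands of characters that the model never has to read.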

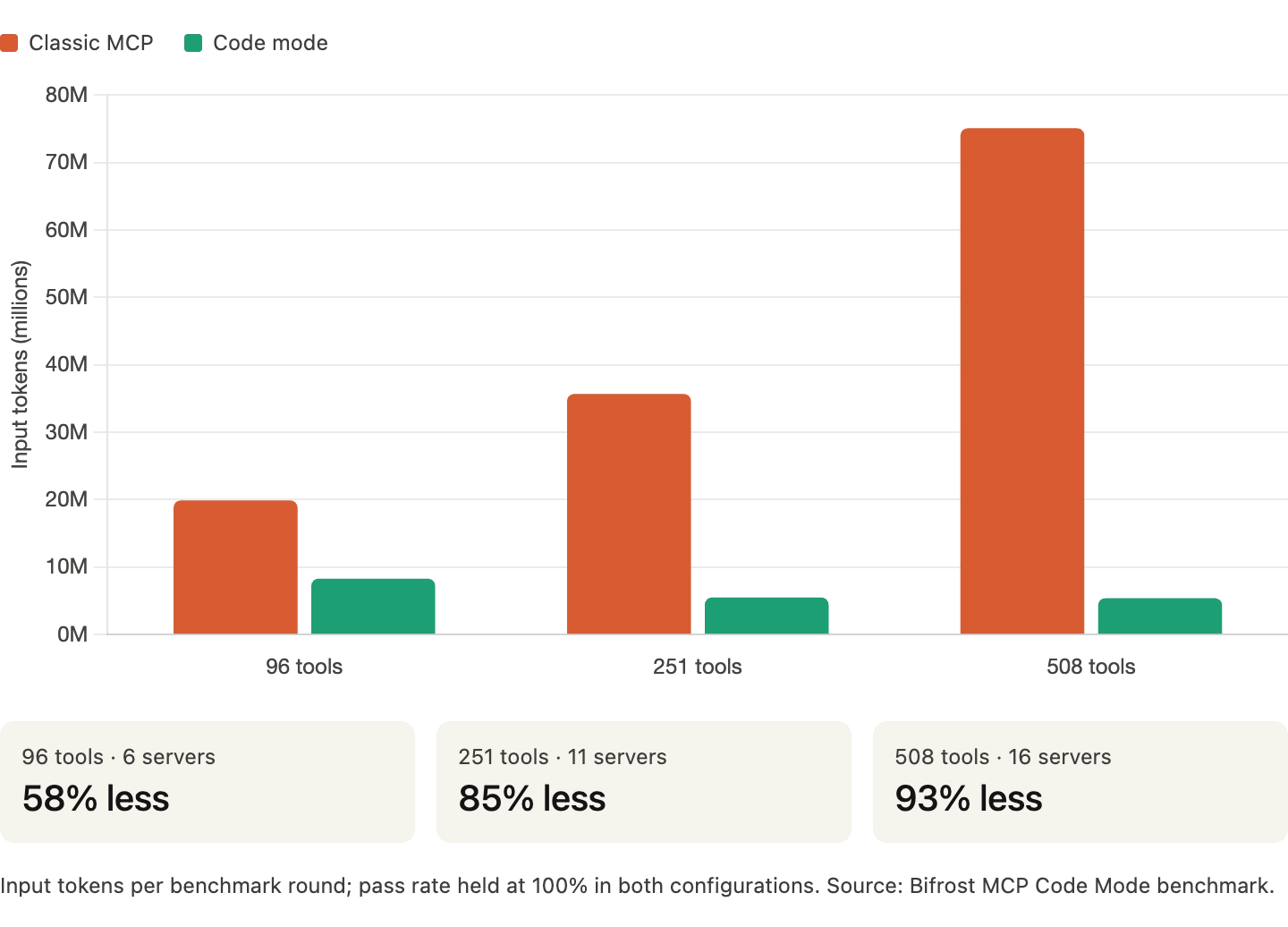

Bifrost's own controlled benchmarks show how the savings compound as the MCP footprint grows. Running 64 identical queries against the same set of tools with Code Mode on and off:

- 96 tools across 6 servers: 58% reduction in input tokens, 56% reduction in estimated cost

- 251 tools across 11 servers: 84% reduction in tokens, 83% reduction in cost

- 508 tools across 16 servers: 93% reduction in tokens, 92% reduction in cost

Pass rate held at 100% in both configurations across all three rounds. Accuracy is not traded away to get the savings. The full methodology and raw numbers are documented in the Bifrost MCP Gateway benchmark report.

The shape of these results matches what independent teams have observed. A third-party benchmark by AIMultiple using GPT-4.1 and the Bright Data MCP server measured a 78.5% reduction in input tokens with the code execution pattern, while keeping a 100% success rate across 50 runs per task.

Beyond Token Savings: Additional Benefits of Code Mode

Token reduction is the headline number, but the operational effects of Code Mode reach further.

- Lower latency: Cloudflare and Anthropic both report 30 to 40% faster end-to-end execution because the model skips multiple round trips through the agent loop. Bifrost's benchmarks show the same pattern: fewer turns, less waiting.

- Fewer round trips: a classic MCP workflow that requires eight turns can collapse to a single executeToolCode call, an 87.5% reduction in sequential model invocations.

- Deterministic execution: the Starlark sandbox has no imports, no file I/O, and no network access outside of the whitelisted tool bindings. Code paths are auditable, repeatable, and cheap to run automatically.

- Privacy by default: intermediate results stay in the sandbox unless the script explicitly logs or returns them. PII, database dumps, and sensitive payloads no longer need to flow through the model context to get from one tool to another.

- Compounding savings at scale: the cost of classic MCP grows with the number of connected servers. The cost of Code Mode is bounded by what the model actually reads for a given task, so the gap widens as the fleet grows.

These compounding effects are why Cloudflare and Anthropic both describe the context-window dynamic as the single most important efficiency problem in production agent infrastructure today.

When to Use Code Mode vs Classic MCP Tool Calls

Code Mode is the right default for any multi-tool, multi-step agent workflow. The specific cases where it pays off most:

- Agents connected to three or more MCP servers

- Workflows that chain four or more tool calls per task

- Tasks that pass large payloads (transcripts, spreadsheets, database rows) between tools

- Long-running agent loops where the full tool list would otherwise be reloaded on every turn

- Claude Code, Cursor, and other CLI agents and editors running against the gateway

Classic direct tool calls still make sense for narrow, single-purpose agents with fewer than ten tools, or for interactive flows where a human approves each call. Bifrost supports both modes on the same gateway, configurable per MCP client, so teams can migrate incrementally rather than rewriting everything at once.
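The guidance above can be condensed into a small decision helper. This is a sketch of the rule of thumb only: the `AgentProfile` fields and the `recommend_mode` function are hypothetical names, and the thresholds are the ones stated in this section rather than anything enforced by the gateway.

```python
from dataclasses import dataclass


@dataclass
class AgentProfile:
    mcp_servers: int
    total_tools: int
    chained_calls_per_task: int
    passes_large_payloads: bool = False
    human_approves_each_call: bool = False


def recommend_mode(profile: AgentProfile) -> str:
    """Return 'code_mode' or 'classic' using the thresholds from this section."""
    if profile.human_approves_each_call:
        return "classic"  # interactive approval flows favor direct calls
    if (profile.mcp_servers >= 3
            or profile.chained_calls_per_task >= 4
            or profile.passes_large_payloads):
        return "code_mode"
    if profile.total_tools < 10:
        return "classic"  # narrow, single-purpose agent
    return "code_mode"  # Code Mode is the default otherwise


print(recommend_mode(AgentProfile(mcp_servers=5, total_tools=150, chained_calls_per_task=6)))
print(recommend_mode(AgentProfile(mcp_servers=1, total_tools=4, chained_calls_per_task=1)))
```

The first profile prints `code_mode` and the second prints `classic`.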

Implementing Code Mode with Bifrost's MCP Gateway

Enabling Code Mode on Bifrost takes four steps in the dashboard:

- Add an MCP client: navigate to the MCP section, add the upstream server (HTTP, SSE, STDIO, or in-process), and let Bifrost discover its tools.

- Toggle Code Mode on: open the client settings and enable Code Mode. No schema changes, no redeployment. From that point, the model receives only the four meta-tools instead of every tool definition.

- Allowlist auto-executable tools: the read-only meta-tools run automatically. executeToolCode becomes auto-executable once every tool its generated script calls is on the allowlist, which keeps write operations behind an approval gate by default.

- Scope access with virtual keys: issue scoped credentials per team, customer, or agent and grant them only the tools they are allowed to call. The scoping works at the tool level, not just the server level, so the same server can expose filesystem_read to one key and withhold filesystem_write from another.
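The allowlist and scoping rules in the last two steps reduce to the same check: every tool a generated script calls must belong to a permitted set. The sketch below illustrates that idea and is not Bifrost's implementation. It assumes the script is simple enough to parse with Python's `ast` module (Starlark syntax is close to Python's but not identical), and the catalog, allowlist, and virtual key names are hypothetical.

```python
import ast

# Hypothetical tool catalog and policies, for illustration only.
TOOL_CATALOG = {"filesystem_read", "filesystem_write"}
AUTO_EXECUTE_ALLOWLIST = {"filesystem_read"}
VIRTUAL_KEY_TOOLS = {
    "vk-analyst": {"filesystem_read"},
    "vk-admin": {"filesystem_read", "filesystem_write"},
}


def called_tools(script: str, catalog: set[str]) -> set[str]:
    """Names of catalog tools invoked as plain function calls in the script."""
    called = set()
    for node in ast.walk(ast.parse(script)):
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            called.add(node.func.id)
    return called & catalog  # ignore builtins such as len() or print()


def is_permitted(script: str, permitted: set[str]) -> bool:
    """True only if every tool the script calls is in the permitted set."""
    return called_tools(script, TOOL_CATALOG) <= permitted


read_script = 'data = filesystem_read(path="notes.txt")\nprint(len(data))'
write_script = 'filesystem_write(path="out.txt", content=filesystem_read(path="notes.txt"))'

print(is_permitted(read_script, AUTO_EXECUTE_ALLOWLIST))           # True
print(is_permitted(write_script, AUTO_EXECUTE_ALLOWLIST))          # False
print(is_permitted(write_script, VIRTUAL_KEY_TOOLS["vk-analyst"])) # False
print(is_permitted(write_script, VIRTUAL_KEY_TOOLS["vk-admin"]))   # True
```

Checking the script before it runs is what keeps write operations behind an approval gate: a single call to a tool outside the set is enough to block automatic execution.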

Connecting Claude Code, Cursor, or any other MCP client to Bifrost takes one line: point the client at the gateway's /mcp endpoint. Every connected MCP server becomes available through that single URL, governed by the virtual key.

The Future of Efficient Agent Infrastructure

The direction of travel is clear. Agents are no longer calling one or two tools. They are orchestrating dozens of systems through dozens of MCP servers, and the naive pattern of loading every tool definition into context on every request does not scale past a handful of integrations. Anthropic, Cloudflare, and the broader MCP ecosystem have converged on the same fix: treat tools as code, let the model write a program, run it in a sandbox, and let only the final result touch the context window. The benchmarks cited above, both Bifrost's own and the third-party AIMultiple test, measure input-token reductions of roughly 58 to 93% once this pattern is in place, with pass rates holding at 100%.

Bifrost implements Code Mode as a native primitive of its MCP gateway, alongside tool filtering, per-tool cost tracking, OAuth 2.0 authentication, and immutable audit logs for every tool execution. Token cost, latency, governance, and observability are handled by the same layer, through the same /mcp endpoint, with a single control plane.

Start Reducing MCP Token Costs with Bifrost

MCP tool-call token costs compound silently until they dominate the agent bill. Code Mode rewrites the math: fewer schemas in context, fewer intermediate round trips, and sandboxed execution that scales to hundreds of tools without growing the per-request token budget. To see what Code Mode looks like against your own MCP footprint and how much it would save, book a demo with the Bifrost team.