Top 5 AI Gateways for Managing MCP Costs at Scale

Compare the top AI gateways for managing MCP costs in 2026, from Code Mode token optimization to virtual key governance, and find the right fit for production agent workflows.

AI agents in production connect to dozens of external tools through the Model Context Protocol (MCP). Without a centralized AI gateway for managing MCP costs, every agent loads every tool definition into the LLM's context window on every request. Connect five servers with 30 tools each, and 150 tool definitions can consume the majority of the token budget before the model reads a single word of the actual prompt. With Gartner projecting that 40% of enterprise applications will integrate task-specific AI agents by the end of 2026, this token overhead becomes a production-critical infrastructure problem.
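The arithmetic behind that overhead can be sketched as follows. The per-definition token count and the context window size are illustrative assumptions, not measurements from any gateway; real tool schemas vary widely in size.

```python
def tool_definition_overhead(servers: int, tools_per_server: int,
                             tokens_per_definition: int) -> int:
    """Tokens spent on tool definitions before the prompt is read."""
    return servers * tools_per_server * tokens_per_definition


# Assumed values, for illustration only.
TOKENS_PER_DEFINITION = 500   # hypothetical average schema size
CONTEXT_WINDOW = 128_000      # hypothetical model context window

overhead = tool_definition_overhead(5, 30, TOKENS_PER_DEFINITION)
share = overhead / CONTEXT_WINDOW
print(f"{overhead} tokens of definitions, {share:.0%} of the window")
```

Under these assumed numbers the definitions alone take more than half of the window; with smaller schemas or a larger window the share drops, so it is worth measuring your own tool catalog.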

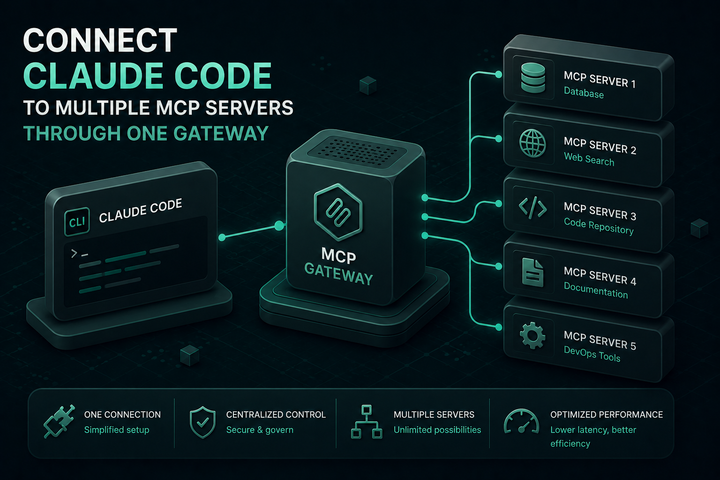

An MCP gateway sits between AI agent clients and MCP tool servers, centralizing authentication, tool routing, observability, and cost governance. But not all gateways address MCP costs the same way. Some optimize at the token level through code execution patterns. Others focus on access control and rate limiting to cap spend indirectly. The five gateways below represent the leading approaches to managing MCP costs at scale in 2026, evaluated on token optimization, governance depth, performance, and production readiness.

Key Criteria for Evaluating AI Gateways for MCP Cost Management

Before comparing specific gateways, teams should evaluate each option against these cost-related criteria:

- Token optimization: Does the gateway reduce the number of tokens consumed per MCP request? This is the highest-impact lever for managing MCP costs at scale. Gateways that implement code execution patterns (where the LLM writes code to orchestrate tools instead of calling them directly) can reduce token usage by 50% or more.

- Tool-level access control: Can administrators restrict which tools each consumer can access? Fewer tools in the context window means fewer tokens per request. Per-consumer tool filtering also prevents unauthorized tool usage that would otherwise inflate costs.

- Per-tool cost tracking: MCP costs extend beyond tokens. If tools call paid external APIs (search, enrichment, code execution), each invocation has a price. Gateways that track cost at the tool level provide a complete picture of agent run economics.

- Budget management and rate limiting: Can the gateway enforce spending ceilings per team, per user, or per project? Rate limits and budget caps prevent runaway costs from misconfigured agents.

- Performance overhead: Gateway latency adds to every request. A gateway that introduces meaningful overhead increases time-to-first-token and end-to-end request duration, and that delay adds up across the many calls in an agent run.

- Audit and observability: Can the team trace every tool call to a specific consumer, with arguments, results, and latency? This data is essential for identifying cost anomalies and optimizing agent workflows.
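To make the per-tool cost tracking and budget criteria concrete, here is a minimal sketch of a ledger that attributes spend to a consumer and a tool and rejects a call that would exceed a ceiling. The class names, consumer names, and prices are all hypothetical; this is not the API of any gateway discussed here.

```python
from collections import defaultdict


class BudgetExceeded(Exception):
    """Raised when a charge would push a consumer past its budget."""


class CostLedger:
    """Tracks spend per consumer and per tool, and enforces a budget cap."""

    def __init__(self, budgets: dict[str, float]):
        self.budgets = budgets               # consumer -> ceiling in USD
        self.spent = defaultdict(float)      # consumer -> total spend
        self.by_tool = defaultdict(float)    # (consumer, tool) -> spend

    def charge(self, consumer: str, tool: str, cost: float) -> None:
        # Consumers without a configured budget get a ceiling of 0.0,
        # so any paid call from them is rejected.
        if self.spent[consumer] + cost > self.budgets.get(consumer, 0.0):
            raise BudgetExceeded(f"{consumer} would exceed its budget")
        self.spent[consumer] += cost
        self.by_tool[(consumer, tool)] += cost


ledger = CostLedger({"team-search": 1.00})
ledger.charge("team-search", "web_search", 0.40)
ledger.charge("team-search", "web_search", 0.40)
try:
    ledger.charge("team-search", "enrichment", 0.40)  # would total 1.20
except BudgetExceeded as exc:
    print("blocked:", exc)
```

Checking the budget before recording the charge means a rejected call leaves the ledger unchanged, which keeps the per-tool totals usable for later anomaly analysis.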

1. Bifrost

Bifrost is a high-performance, open-source AI gateway built in Go by Maxim AI. It operates as both an MCP client and server, connecting to external MCP servers via STDIO, HTTP, or SSE while exposing all discovered tools through a single gateway endpoint. What sets Bifrost apart for MCP cost management is its dual function as an LLM gateway and MCP gateway in a single binary, combined with Code Mode, the most aggressive token optimization capability available in any MCP gateway today.

MCP cost management capabilities:

- Code Mode: Instead of injecting every tool definition into the LLM's context, Code Mode replaces the entire tool catalog with four meta-tools. The LLM discovers tools on demand, reads only the definitions it needs, and writes a short Python-syntax script that runs in a sandboxed Starlark interpreter (Starlark is a restricted dialect of Python). At 500 tools across 16 servers, Code Mode reduces per-query token usage by roughly 14x (1.15M tokens to 83K tokens), a 92% reduction with no accuracy tradeoff. At smaller scale (5 servers, 100 tools), the reduction is approximately 50% with 3 to 4x fewer LLM round trips.

- Virtual key governance: Virtual keys scope which MCP tools each consumer can access at the tool level. Fewer tools in context means fewer tokens per request. Combined with rate limits and hierarchical budget controls, teams can cap MCP spend per user, team, or customer.

- Per-tool cost tracking: Bifrost tracks cost at the tool level using a configurable pricing model. Tool costs appear in logs alongside LLM token costs, providing a unified view of what each agent run actually cost.

- MCP tool filtering: Tool filtering enforces strict allow-lists per virtual key. Allow `database_query` while blocking `database_delete`. The model never receives definitions for tools outside the consumer's scope.

- Audit logs: Every tool execution captures tool name, server, arguments, result, latency, virtual key, and parent LLM request for SOC 2, GDPR, HIPAA, and ISO 27001 compliance.

- Performance: 11 microseconds of overhead at 5,000 requests per second. The Go-based architecture avoids the latency penalties of interpreted-language gateways.

- Unified LLM and MCP gateway: Bifrost handles both model routing (failover, load balancing, semantic caching across 20+ providers) and MCP tool orchestration in a single deployment. Teams see LLM token costs and MCP tool costs in one audit log under one access control model.
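The on-demand discovery idea behind Code Mode can be illustrated with a rough token comparison. This is a sketch of the general pattern only: the catalog, the token sizes, and the function names are hypothetical and do not represent Bifrost's actual meta-tools or measurements.

```python
# Hypothetical catalog: tool name -> size of its full definition in tokens.
CATALOG = {f"tool_{i}": 300 for i in range(100)}
NAME_TOKENS = 5  # assumed cost of listing a single tool name


def classic_context_tokens(catalog: dict[str, int]) -> int:
    """Every definition is injected into the context on every request."""
    return sum(catalog.values())


def on_demand_context_tokens(catalog: dict[str, int],
                             needed: list[str]) -> int:
    """Only a name listing plus the requested definitions are loaded."""
    listing = NAME_TOKENS * len(catalog)
    return listing + sum(catalog[name] for name in needed)


needed = ["tool_3", "tool_7", "tool_42"]
print("classic:  ", classic_context_tokens(CATALOG))
print("on demand:", on_demand_context_tokens(CATALOG, needed))
```

The gap widens as the catalog grows, because the classic cost scales with every definition while the on-demand cost scales mainly with the handful of tools a task actually uses.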

Bifrost is open source under Apache 2.0 and available on GitHub. Enterprise features include clustering, in-VPC deployment, vault support, and guardrails.
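Allow-list filtering per virtual key, as described in the capability list above, amounts to removing out-of-scope tools before the catalog reaches the model. The following is a minimal sketch under assumed data structures; `Tool`, `visible_tools`, and the token sizes are hypothetical and are not Bifrost's configuration format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    definition_tokens: int  # assumed size of the tool's schema in tokens


def visible_tools(catalog: list[Tool], allowed: set[str]) -> list[Tool]:
    """Return only the tools on the consumer's allow-list."""
    return [tool for tool in catalog if tool.name in allowed]


catalog = [
    Tool("database_query", 400),
    Tool("database_delete", 350),
    Tool("web_search", 300),
]
# Hypothetical scope for one virtual key: read-only database access.
allowed_for_key = {"database_query"}

exposed = visible_tools(catalog, allowed_for_key)
print([t.name for t in exposed])
print(sum(t.definition_tokens for t in exposed), "definition tokens in context")
```

Because filtering happens before the definitions are sent, the same step serves both access control and token reduction.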

Best for: Teams running multiple MCP servers at scale that need the deepest token optimization (Code Mode), unified LLM and MCP cost visibility, and enterprise governance without vendor lock-in.

2. Cloudflare MCP Server Portals

Cloudflare extended its Workers platform with MCP Server Portals, a managed gateway that aggregates multiple MCP servers behind a single URL. Developers register servers with Cloudflare, and clients configure one Portal endpoint instead of individual server URLs. Cloudflare also launched its own Code Mode implementation in April 2026, using a JavaScript-based sandbox powered by Workers to reduce token consumption.

MCP cost management capabilities:

- Code Mode (recently launched): Cloudflare's Code Mode generates JavaScript that executes against MCP server schemas in a sandboxed Worker. Demonstrated benchmarks showed 32% token reduction for simple tasks and 81% for complex batch operations. The approach uses a fixed token footprint regardless of how many API endpoints exist behind the gateway.

- AI Gateway integration: Cloudflare's AI Gateway provides rate limiting and cost controls for LLM traffic flowing through the platform, including token consumption limits per employee.

- Zero Trust access control: MCP Server Portals integrate with Cloudflare Access for identity-based authorization. Teams can enforce which users or groups can access specific MCP servers.

- Shadow MCP detection: Cloudflare Gateway can discover unauthorized remote MCP servers using DLP-based body inspection, addressing cost exposure from unmanaged MCP usage.

Limitations for MCP cost management: Cloudflare's MCP capabilities are distributed across multiple products (MCP Server Portals, AI Gateway, Access, Gateway) rather than unified in a single control plane. Tool-level filtering and per-tool cost tracking at the granularity available in purpose-built MCP gateways are not currently documented. Code Mode is also a recent addition to the platform, so it has less production history than longer-established implementations.

Best for: Teams already running workloads on Cloudflare's network that want a managed, globally distributed MCP layer without operating gateway infrastructure.

3. Kong AI Gateway

Kong added MCP support through the AI MCP Proxy plugin in Gateway 3.12. The plugin acts as a protocol bridge, translating between MCP and HTTP so that MCP-compatible clients can call existing REST APIs through Kong without rewriting them as MCP servers. Kong also supports proxying to upstream MCP servers and aggregating multiple servers behind a single endpoint.

MCP cost management capabilities:

- Rate limiting: Kong's existing rate limiting plugins (including AI Rate Limiting Advanced) apply to MCP traffic, allowing teams to cap token consumption and request volume per consumer.

- ACL-based tool filtering: The AI MCP Proxy plugin supports access control lists at both the default level and per-tool level. Consumers only interact with tools appropriate to their role.

- MCP-specific Prometheus metrics: Kong exposes MCP traffic metrics that integrate with existing Grafana or Datadog dashboards, enabling cost monitoring alongside API traffic.

- OAuth 2.1 support: The AI MCP OAuth2 plugin handles authentication for MCP server connections.

- REST-to-MCP conversion: Teams can expose existing REST APIs as MCP tools without building dedicated MCP servers, potentially reducing infrastructure costs.

Limitations for MCP cost management: Kong does not implement Code Mode or equivalent token optimization at the infrastructure layer. MCP support was added to a mature API management product, so the tool-level cost tracking and token reduction capabilities available in MCP-native gateways are not present. The AI MCP Proxy plugin is part of Kong's enterprise AI Gateway offering.

Best for: Organizations with existing Kong deployments that want to extend their API governance model to cover MCP traffic without introducing separate infrastructure.

4. Docker MCP Gateway

Docker's MCP Gateway applies familiar container orchestration workflows to MCP server management. It containerizes MCP servers for isolation and provides a gateway layer for routing, basic policy enforcement, and credential management. The open-source project follows Docker's infrastructure-as-code patterns.

MCP cost management capabilities:

- Container isolation: Each MCP server runs in its own container with resource limits (CPU, memory), preventing any single server from consuming disproportionate resources.

- Centralized credential management: Credentials are managed at the gateway level rather than scattered across individual server configurations, reducing the risk of credential sprawl that can lead to unauthorized (and uncounted) tool usage.

- Basic routing and policy enforcement: The gateway routes requests to the appropriate containerized MCP server and applies basic policies.

Limitations for MCP cost management: Docker MCP Gateway does not include token optimization features like Code Mode. There is no built-in per-tool cost tracking, budget management, or granular tool-level access control beyond what container networking provides. For advanced cost governance, teams need to layer additional systems (identity management, audit logging, budget controls) on top of the container infrastructure. Scaling beyond what Docker or Kubernetes provides requires additional orchestration knowledge.

Best for: Teams with strong container operations experience that want an open-source, self-hosted MCP gateway using familiar Docker patterns, and who will build cost governance on top of the infrastructure layer.

5. MCPX (Lunar.dev)

MCPX is Lunar.dev's open-source MCP gateway focused on enterprise governance and security. It provides a governed entry point for all agent-to-tool interactions, with access control, auditability, and policy enforcement as primary design goals. Licensed under MIT, it can be deployed via Docker in minutes.

MCP cost management capabilities:

- Access control depth: MCPX enforces permissions at the server level and tool level, reducing the number of tools exposed to each consumer and the corresponding token overhead.

- Audit trails: Immutable, full-chain logs attribute each action to both the agent that executed it and the user it acted on behalf of. These logs enable cost anomaly detection and usage analysis.

- Policy enforcement: Custom policies can block unsafe or expensive operations before they reach MCP servers.

- Low latency: Published benchmarks report approximately 4ms p99 latency.

Limitations for MCP cost management: MCPX does not implement Code Mode or equivalent token-level optimization. Per-tool cost tracking with configurable pricing models is not documented. Budget management and hierarchical spending controls are not core features. The primary focus is governance and security rather than token economics.

Best for: Security-focused teams that need fine-grained access control and immutable audit trails for MCP traffic, with cost management addressed primarily through access restrictions.

Comparing MCP Cost Management Across Gateways

The five gateways above take fundamentally different approaches to MCP cost management:

- Token-level optimization (Code Mode): Bifrost provides the most mature implementation with 50 to 92% token reduction depending on tool count. Cloudflare recently launched its own Code Mode with demonstrated reductions of 32 to 81%. Kong, Docker, and MCPX do not implement token-level optimization at the gateway layer.

- Per-tool cost tracking: Only Bifrost tracks both LLM token costs and external API costs at the tool level in a unified log. Other gateways provide request-level metrics but not tool-level cost attribution.

- Budget controls: Bifrost offers hierarchical budget management at the virtual key, team, and customer level. Kong provides rate limiting. Cloudflare applies token limits through AI Gateway. Docker and MCPX rely on external systems for budget enforcement.

- Unified LLM + MCP gateway: Bifrost is the only option that handles both LLM provider routing and MCP tool orchestration in a single binary. Kong runs its LLM gateway and MCP gateway side by side within the same platform. Cloudflare separates these into AI Gateway and MCP Server Portals. Docker and MCPX focus exclusively on MCP.

For teams where MCP token costs are the dominant concern, the presence or absence of Code Mode is the deciding factor. Anthropic's engineering team documented that code execution patterns can reduce context from 150,000 tokens to 2,000 tokens for complex workflows. At enterprise scale with hundreds of agent runs per day, this is the difference between manageable and unsustainable inference spend.
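The reduction figures quoted in this article can be checked with simple arithmetic. The sketch below only recomputes percentages and ratios from the token counts stated in the text (1.15M to 83K for Bifrost at 500 tools, 150,000 to 2,000 for the Anthropic example); it makes no claim about how those counts were measured.

```python
def reduction(before: int, after: int) -> tuple[float, float]:
    """Return (percent reduction, before/after ratio)."""
    return (1 - after / before) * 100, before / after


for label, before, after in [
    ("500 tools across 16 servers", 1_150_000, 83_000),
    ("Anthropic complex workflow", 150_000, 2_000),
]:
    pct, ratio = reduction(before, after)
    print(f"{label}: {pct:.1f}% fewer tokens, {ratio:.1f}x smaller")
```

The first case works out to about 92.8% and 13.9x, consistent with the "roughly 14x" and "92%" figures above; the second works out to about 98.7% and 75x.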

The LLM Gateway Buyer's Guide provides a detailed capability matrix for teams evaluating these options across governance, performance, and MCP support dimensions.

Start Managing MCP Costs with Bifrost

For teams that need the deepest MCP cost optimization available today, Bifrost delivers up to 92% token reduction through Code Mode, unified LLM and MCP cost visibility, per-tool cost tracking, and enterprise governance in a single open-source deployment. To see how Bifrost's MCP gateway can reduce your agent infrastructure costs, book a demo with the Bifrost team.