Best AI Gateway for MCP Tool-Level Observability

Bifrost provides MCP tool-level observability with per-execution audit logs, per-tool cost tracking, Prometheus metrics, OpenTelemetry tracing, and a native Datadog connector in a single AI gateway.

When an AI agent connected to a dozen MCP servers makes a wrong tool call, most teams cannot trace back through the execution chain to find where it went wrong. When costs spike after a deployment, they cannot pinpoint which tools are burning budget. When a compliance audit asks what tools a specific user's agent invoked last Tuesday, they have no structured answer. This is the MCP observability gap, and it is widening as AI agents move from prototypes to production. Bifrost, the open-source AI gateway by Maxim AI, closes this gap with tool-level observability that captures every MCP execution with full metadata, tracks costs at the tool level, and exports telemetry to the monitoring stacks teams already use.

Gartner predicts that 40% of enterprise applications will integrate task-specific AI agents by the end of 2026. With the EU AI Act's traceability requirements taking effect in August 2026, logging every agent execution with full traceability is moving from good engineering practice to a legal requirement for teams building AI agents that make consequential decisions.

Why MCP Observability Is Different from LLM Monitoring

Traditional LLM monitoring captures prompts, responses, tokens, latency, and cost at the API level. That is useful for understanding model behavior, but it misses the critical layer for agents: tool execution.

An AI agent's failure rarely manifests as a single bad model response. It manifests as a chain of decisions: the model selects a tool, passes parameters, receives a result, decides the next action, and repeats. The most common failure modes in production agent systems are:

- Wrong tool selection: The model picks the right category of tool but the wrong specific one, especially when the tool catalog is large and definitions overlap.

- Incorrect parameters: The model selects the correct tool but passes wrong or incomplete arguments.

- Ordering failures: Multi-step workflows execute tools in the wrong sequence, corrupting state for subsequent steps.

- Silent cost escalation: Tools that call paid external APIs (search, enrichment, code execution) accumulate costs that never appear in LLM token metrics.

Without tool-level observability, teams cannot answer basic operational questions: Which tools did this agent call? In what order? With what arguments? What came back? Which virtual key triggered the execution? What was the total cost including external API charges?

An analysis of the MCP observability landscape found that most existing approaches fall into SDK-based instrumentation (which requires code changes in every application) or request-level logging (which captures prompts and responses but not the tool execution chain between them). Neither approach provides the protocol-native, tool-level visibility that production MCP deployments require.

What MCP Tool-Level Observability Requires

Before evaluating specific gateways, teams should define what complete MCP observability looks like:

- Per-execution tool logs: Every tool call captured as a first-class log entry with tool name, source MCP server, arguments passed, result returned, latency, the virtual key that triggered it, and the parent LLM request that initiated the agent loop

- Per-tool cost tracking: Token costs and external API costs tracked separately at the tool level, not aggregated at the request level

- Consumer attribution: Every execution attributed to a specific consumer (user, team, or customer integration) through virtual keys or equivalent identity mechanisms

- Content control: Ability to disable content logging per environment while still capturing tool name, server, latency, and status for operational monitoring

- Export to existing stacks: Native integration with Prometheus, OpenTelemetry, Datadog, Grafana, and other platforms teams already use, rather than requiring a proprietary dashboard

- Compliance-grade audit trails: Immutable logs that satisfy SOC 2, GDPR, HIPAA, and ISO 27001 requirements

- Real-time monitoring: Live dashboards for token consumption, tool usage patterns, and latency breakdowns across all agent sessions

How Bifrost Provides MCP Tool-Level Observability

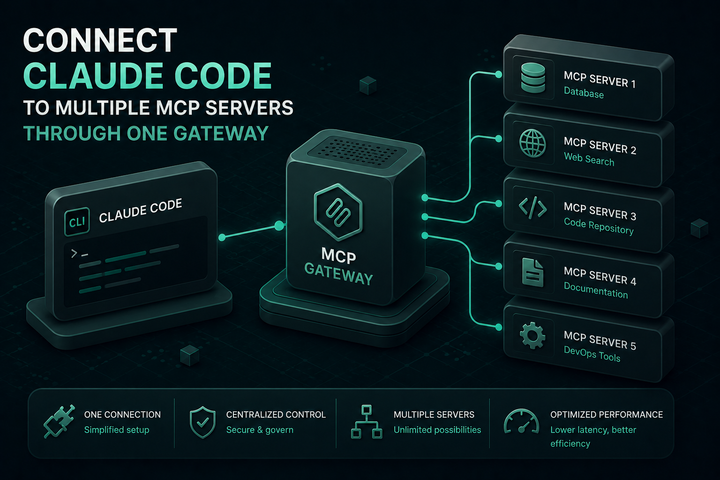

Bifrost operates as both an MCP client and server, which means every tool invocation between AI agents and MCP servers flows through the gateway. This architectural position gives Bifrost complete visibility into tool execution without requiring SDK instrumentation in individual applications.

First-Class Tool Execution Logging

Every MCP tool execution in Bifrost is a first-class log entry. For each call, the gateway captures:

- Tool name and the MCP server it came from

- Arguments passed to the tool

- Result returned from the tool

- Execution latency

- The virtual key that triggered the execution

- The parent LLM request that initiated the agent loop

Teams can pull up any agent run and trace exactly which tools it called, in what order, and what came back. Filtering by virtual key enables auditing what a specific team or customer has been running. For environments where content sensitivity is a concern, content logging can be disabled while still capturing tool name, server, latency, and status for operational metrics.
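As a rough illustration of the fields listed above, the sketch below models a tool execution log entry and the three operations this section describes: tracing a run in order, filtering by virtual key, and dropping content while keeping operational fields. The class, field names, and the `sequence` field are assumptions made for this example; they are not Bifrost's actual log schema or API.

```python
from dataclasses import dataclass, replace
from typing import Any, Optional


@dataclass(frozen=True)
class ToolExecutionLog:
    """Hypothetical log record; field names are illustrative only."""
    tool_name: str
    mcp_server: str
    arguments: Optional[dict]   # None when content logging is disabled
    result: Optional[Any]       # None when content logging is disabled
    latency_ms: float
    status: str
    virtual_key: str
    parent_request_id: str      # the LLM request that started the agent loop
    sequence: int               # assumed: position of the call within the run


def trace_run(logs, parent_request_id):
    """Return the tool calls of one agent run in execution order."""
    run = [e for e in logs if e.parent_request_id == parent_request_id]
    return sorted(run, key=lambda e: e.sequence)


def calls_for_key(logs, virtual_key):
    """Return every tool call triggered by one virtual key."""
    return [e for e in logs if e.virtual_key == virtual_key]


def without_content(entry):
    """Drop arguments and result; keep tool, server, latency, and status."""
    return replace(entry, arguments=None, result=None)


# Made-up sample data for the example.
LOGS = [
    ToolExecutionLog("fetch_page", "browser", {"url": "https://example.com"},
                     "<html>...</html>", 210.0, "ok", "vk-team-a", "req-1", 2),
    ToolExecutionLog("web_search", "search", {"q": "mcp"},
                     ["result-1"], 120.5, "ok", "vk-team-a", "req-1", 1),
    ToolExecutionLog("run_sql", "warehouse", {"sql": "select 1"},
                     [[1]], 35.2, "ok", "vk-team-b", "req-2", 1),
]

if __name__ == "__main__":
    for entry in trace_run(LOGS, "req-1"):
        print(entry.sequence, entry.mcp_server, entry.tool_name)
```

Keeping the parent request identifier on every entry is what makes the per-run trace a simple filter and sort rather than a join across separate log streams.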

Per-Tool Cost Tracking

MCP costs are not just token costs. If tools call paid external APIs (search engines, data enrichment services, code execution platforms), each invocation has a price that standard LLM monitoring never captures.

Bifrost tracks cost at the tool level using a pricing configuration defined per MCP client. These costs appear in logs alongside LLM token costs, providing a complete picture of what each agent run actually cost, not just the model portion. When finance asks why the AI bill doubled last month, teams can trace the increase to specific tools, specific consumers, and specific agent workflows.
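The arithmetic behind a combined cost figure can be sketched as follows. The pricing table, tool names, and dollar amounts are invented for illustration and do not reflect Bifrost's pricing configuration format; the point is only that the run total is the LLM token cost plus a per-call price for each tool invocation.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical per-call prices in USD, keyed by (MCP client, tool name).
TOOL_PRICING = {
    ("search", "web_search"): Decimal("0.005"),
    ("enrichment", "enrich_company"): Decimal("0.02"),
}


def run_cost(llm_token_cost, tool_calls):
    """Return (total, per_tool) for one agent run.

    tool_calls is a list of (mcp_client, tool_name) tuples. Tools without
    a configured price are counted as free in this sketch.
    """
    per_tool = defaultdict(Decimal)
    for client, tool in tool_calls:
        per_tool[tool] += TOOL_PRICING.get((client, tool), Decimal("0"))
    total = llm_token_cost + sum(per_tool.values(), Decimal("0"))
    return total, dict(per_tool)


if __name__ == "__main__":
    calls = [("search", "web_search")] * 3 + [("enrichment", "enrich_company")]
    total, per_tool = run_cost(Decimal("0.042"), calls)
    print(total, per_tool)
```

`Decimal` is used instead of floats so that small per-call prices add up exactly when aggregated across many runs.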

Prometheus Metrics

Bifrost exposes a native Prometheus metrics endpoint for scraping, covering:

- Token usage (input, output, cache creation, cache read) per provider and model

- Cost attribution per virtual key, team, and provider

- Latency distributions across all requests

- Cache hit rates for semantic caching

- Error rates by provider and status code

- MCP tool invocation counts and latencies

Metrics are collected asynchronously, off the request path, so collection does not add to request latency. Teams using Grafana, Prometheus Alertmanager, or any Prometheus-compatible stack can build dashboards and alerts on MCP tool usage alongside their existing infrastructure metrics.
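To make the scrape output concrete, here is a minimal sketch that aggregates a counter from Prometheus text exposition format by one label. The metric name `mcp_tool_invocations_total` and its labels are hypothetical, chosen for this example; check the gateway's actual metrics output for the real names. The parser is deliberately simplified and ignores timestamps and escaped quotes in label values.

```python
import re
from collections import Counter

# Made-up scrape output; metric and label names are assumptions.
SAMPLE_SCRAPE = """\
# HELP mcp_tool_invocations_total Hypothetical counter of MCP tool calls.
# TYPE mcp_tool_invocations_total counter
mcp_tool_invocations_total{server="search",tool="web_search"} 42
mcp_tool_invocations_total{server="search",tool="news_search"} 8
mcp_tool_invocations_total{server="warehouse",tool="run_sql"} 17
"""

SAMPLE_LINE = re.compile(
    r'^(?P<name>[a-zA-Z_:][a-zA-Z0-9_:]*)\{(?P<labels>[^}]*)\}\s+(?P<value>\S+)$'
)
LABEL_PAIR = re.compile(r'(\w+)="([^"]*)"')


def sum_by_label(text, metric, label):
    """Sum the samples of one metric, grouped by the value of one label."""
    totals = Counter()
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and HELP/TYPE comments
        match = SAMPLE_LINE.match(line)
        if match and match.group("name") == metric:
            labels = dict(LABEL_PAIR.findall(match.group("labels")))
            totals[labels.get(label, "")] += float(match.group("value"))
    return dict(totals)


if __name__ == "__main__":
    print(sum_by_label(SAMPLE_SCRAPE, "mcp_tool_invocations_total", "server"))
```

In a real deployment this aggregation would normally be a PromQL query in Grafana or Alertmanager; the sketch only shows what the labeled samples make possible.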

OpenTelemetry Integration

Bifrost's OpenTelemetry (OTLP) integration sends span-level data to any OTLP-compatible backend: Grafana, New Relic, Honeycomb, Jaeger, or Zipkin. This enables distributed tracing across the full agent execution path, from the initial user request through LLM inference to tool execution and back.

For teams building on Anthropic's context engineering recommendations, which emphasize treating context as a finite resource with diminishing returns, OpenTelemetry traces provide the data needed to identify which tools contribute meaningful context and which consume tokens without proportional value.

Native Datadog Connector

Bifrost's Datadog connector pushes APM traces, LLM observability data, and cost metrics directly into Datadog dashboards. MCP tool execution data appears alongside application metrics, infrastructure health, and CI/CD pipeline data in the same dashboards engineering teams already monitor. This eliminates the need for a separate observability stack for AI agent workflows.

Built-In Dashboard

For teams that need immediate visibility without configuring external monitoring, Bifrost's built-in web interface at http://localhost:8080/logs provides real-time monitoring of token consumption, tool usage patterns, error rates, and latency breakdowns across all agent sessions. This is particularly useful during development and debugging before production monitoring stacks are configured.

Automated Log Exports

For compliance and long-term cost analysis, Bifrost supports automated log exports to storage systems and data lakes. Every agent interaction is recorded with full metadata, providing the audit trail that compliance teams need.

Observability Combined with Governance and Cost Control

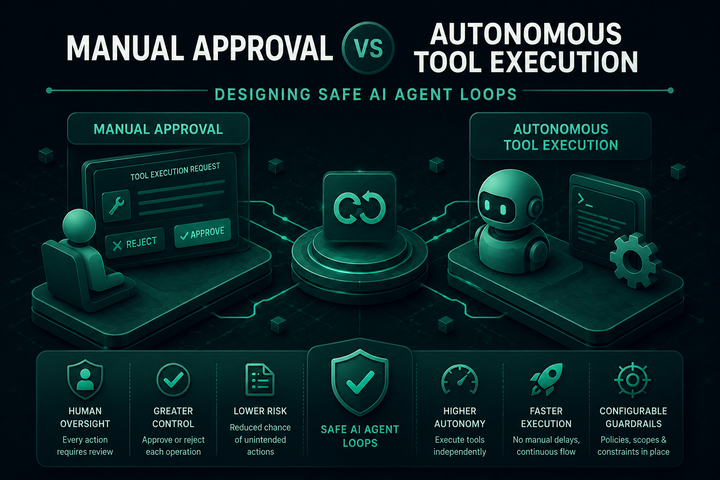

Observability without governance produces dashboards. Governance without observability produces blind enforcement. Bifrost provides both in a single gateway.

Tool filtering controls which tools each consumer can access. Fewer tools in context means less noise in observability data and lower token costs. When a virtual key only has access to five tools instead of fifty, debugging tool selection errors becomes straightforward.

Rate limits and budget controls prevent cost anomalies before they appear in dashboards. Set spending ceilings per virtual key, team, or customer. When a budget is reached, requests are blocked automatically.
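The two controls described so far, a per-key tool allowlist and a spending ceiling, can be sketched as a pre-execution check. The class and method names below are hypothetical and only illustrate the enforcement logic; they are not Bifrost's configuration or API.

```python
from decimal import Decimal


class RequestBlocked(Exception):
    """Raised when a call violates the key's tool allowlist or budget."""


class VirtualKeyPolicy:
    """Hypothetical per-key policy: allowed tools plus a spending ceiling."""

    def __init__(self, allowed_tools, budget_usd):
        self.allowed_tools = frozenset(allowed_tools)
        self.budget_usd = Decimal(budget_usd)
        self.spent_usd = Decimal("0")

    def authorize(self, tool_name):
        """Check a tool call before it runs; raise if it must be blocked."""
        if tool_name not in self.allowed_tools:
            raise RequestBlocked(f"tool not allowed: {tool_name}")
        if self.spent_usd >= self.budget_usd:
            raise RequestBlocked("budget reached")

    def record_cost(self, cost_usd):
        """Add the cost of a completed call to the key's running total."""
        self.spent_usd += Decimal(cost_usd)


if __name__ == "__main__":
    policy = VirtualKeyPolicy({"web_search"}, "1.00")
    policy.authorize("web_search")   # allowed and under budget
    policy.record_cost("1.00")
    try:
        policy.authorize("web_search")
    except RequestBlocked as exc:
        print("blocked:", exc)
```

Checking the allowlist before the budget means a disallowed tool is rejected with the more specific reason, which is the one an operator debugging a blocked call usually needs first.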

Code Mode reduces the volume of tool execution data that needs to be processed. Instead of logging 6 LLM turns with 150+ tool definitions per workflow, Code Mode collapses this to 3 to 4 turns with approximately 50 tokens in tool definitions. The 92% token reduction at 500 tools also removes most of the tool-definition overhead that would otherwise show up as noise in observability data.

Audit logs provide immutable records for SOC 2, GDPR, HIPAA, and ISO 27001 compliance. The logging pipeline is designed to capture every tool suggestion, approval, and execution with full metadata.

For a detailed capability matrix across governance, observability, and MCP support, see the LLM Gateway Buyer's Guide.

Setting Up MCP Tool-Level Observability with Bifrost

    # Start Bifrost
    npx -y @maximhq/bifrost

From the Bifrost dashboard at http://localhost:8080:

- Add MCP servers: Navigate to the MCP section. Choose connection type (HTTP, SSE, or STDIO), enter the endpoint, and Bifrost discovers tools automatically with periodic refresh.

- Create virtual keys: Issue keys per consumer with tool-level access control. Every tool execution will be attributed to the key that triggered it.

- Configure telemetry exports: Enable Prometheus metrics at the /metrics endpoint, configure OpenTelemetry export to your tracing backend, or activate the Datadog connector for native APM integration.

- Enable Code Mode (optional): For clients connecting to 3+ MCP servers, toggle Code Mode to reduce token overhead and simplify observability data.

- Connect agent clients: Point Claude Code, Cursor, Gemini CLI, or any MCP-compatible client to Bifrost's /mcp endpoint.

Bifrost adds only 11 microseconds of overhead at 5,000 requests per second. The observability pipeline runs asynchronously, so logging does not add latency to tool execution. Bifrost is open source under Apache 2.0 and available on GitHub, with enterprise features including clustering, in-VPC deployment, and custom plugins.

Get Complete MCP Observability with Bifrost

Production AI agent teams need an AI gateway that provides MCP tool-level observability, not just LLM request logging. Bifrost delivers per-execution tool logs with full metadata, per-tool cost tracking that covers both tokens and external APIs, native export to Prometheus, OpenTelemetry, and Datadog, and compliance-grade audit trails, all in a single open-source deployment. To see how Bifrost's MCP gateway can bring full observability to your agent infrastructure, book a demo with the Bifrost team.