AI Governance Best Practices for Enterprise Teams

A practical guide to AI governance best practices for enterprise teams, covering policy, controls, infrastructure, and continuous oversight at scale.

AI governance best practices for enterprise teams have moved from aspirational guidance to operational necessity. The EU AI Act imposes obligations on high-risk AI systems, the NIST AI Risk Management Framework has become the de facto baseline in the United States, and ISO/IEC 42001 defines a global management system standard, so enterprise teams now operate inside a layered compliance environment. The stakes are concrete: a recent Deloitte analysis found that while 87% of executives claim to have AI governance frameworks, fewer than 25% have fully operationalized them. A 2025 LayerX enterprise data security report showed that 67% of ChatGPT access happens through unmanaged accounts, with single sign-on adoption effectively zero in many organizations.

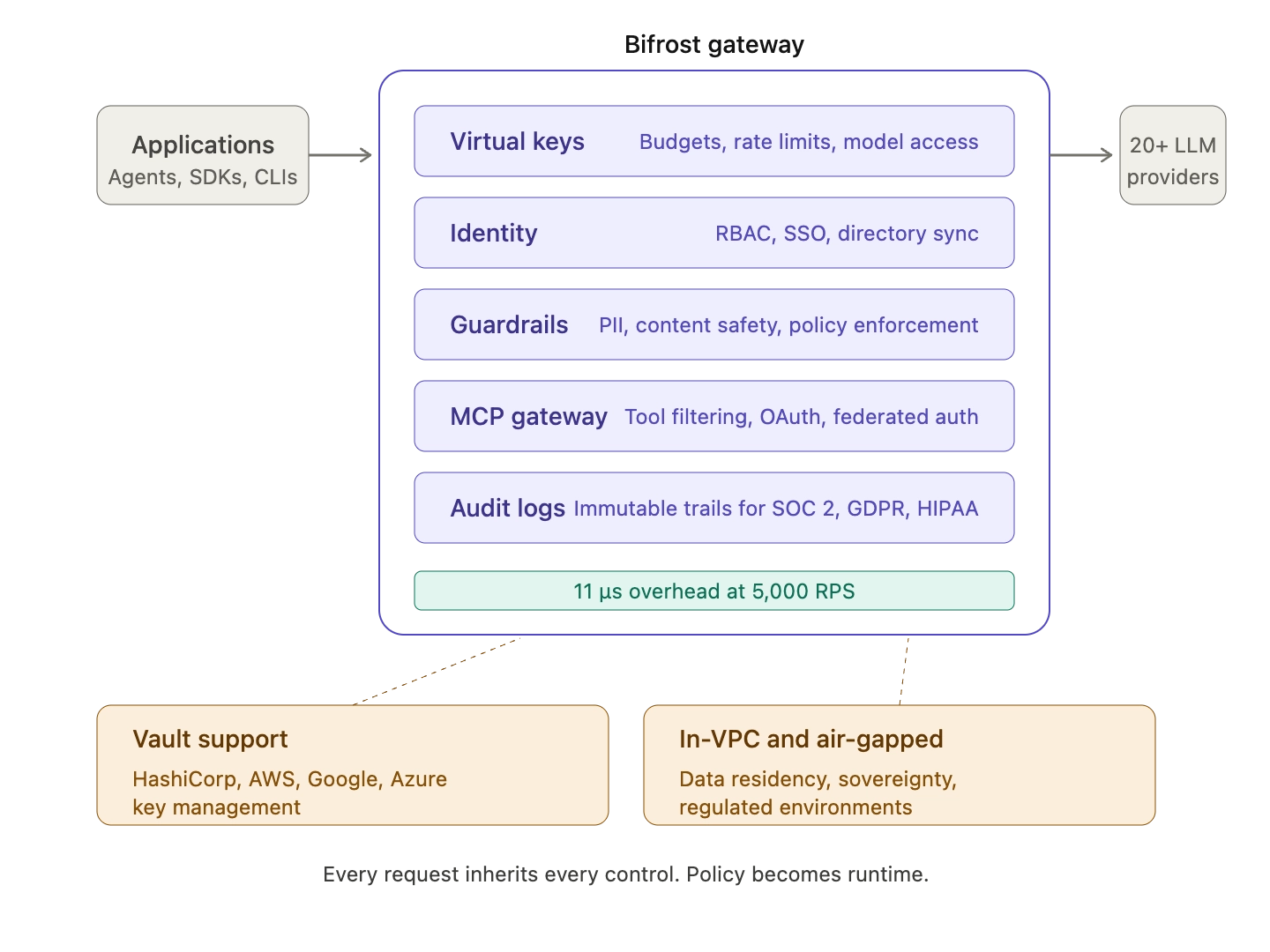

This guide outlines AI governance best practices for enterprise teams, organized around the policy, organizational, and infrastructure layers that determine whether governance actually holds in production. Bifrost, the open-source AI gateway built by Maxim AI, is referenced where infrastructure-level controls map directly to governance outcomes.

What AI Governance Means for Enterprise Teams

Enterprise AI governance is the set of policies, processes, controls, and infrastructure that ensure AI systems are used responsibly, lawfully, and in alignment with organizational risk tolerance. It is not a single document or a one-time risk assessment. The NIST AI RMF defines GOVERN as the cross-cutting function that cultivates a culture of risk management, while MAP, MEASURE, and MANAGE operationalize that culture across the AI lifecycle.

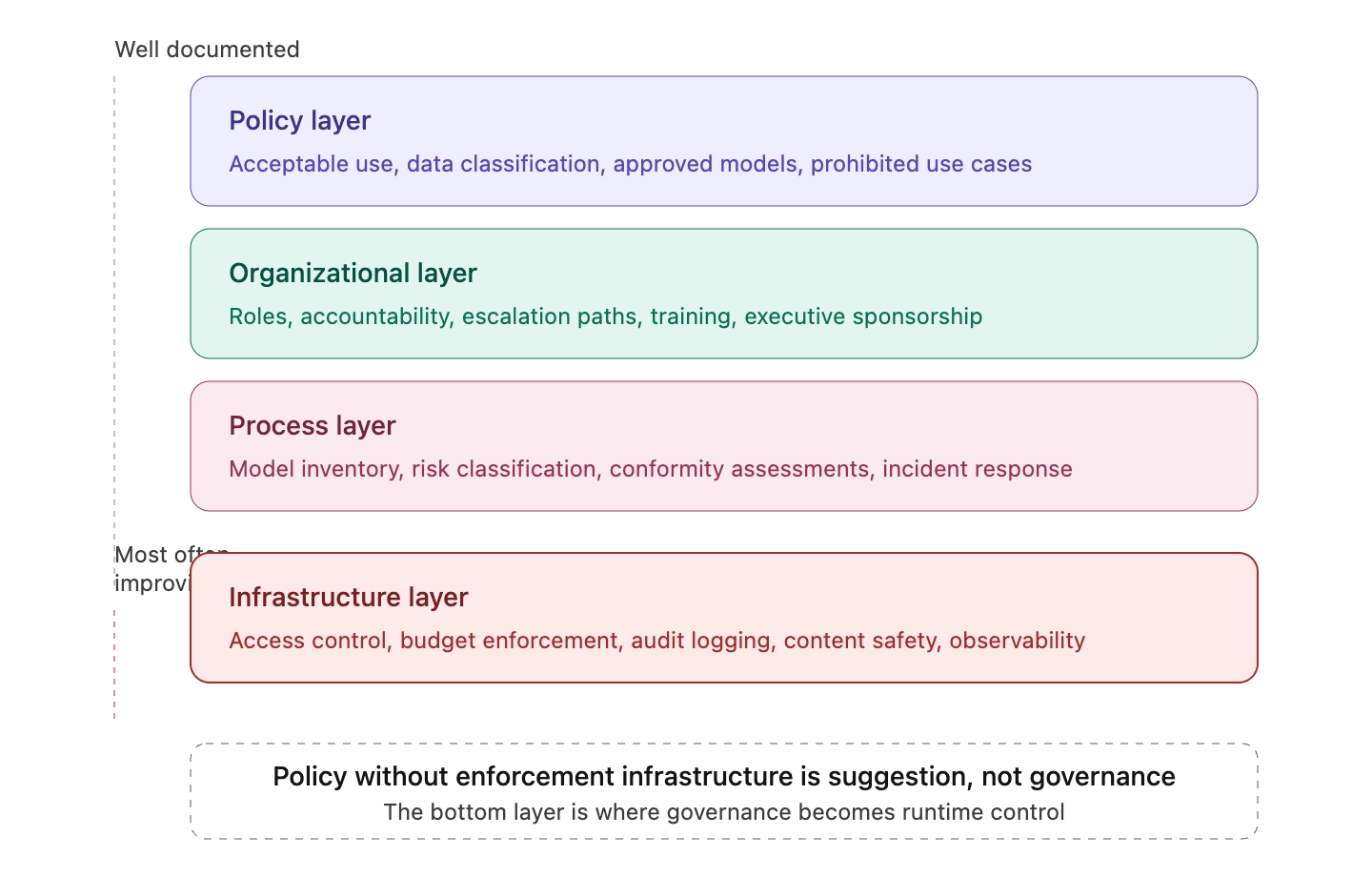

For enterprise teams, governance typically spans four layers:

- Policy layer: acceptable use, data classification, approved models, prohibited use cases

- Organizational layer: roles, accountability, escalation paths, training, executive sponsorship

- Process layer: model inventory, risk classification, conformity assessments, incident response

- Infrastructure layer: access control, budget enforcement, audit logging, content safety, observability

Most governance failures happen because the first three layers are well documented while the fourth is improvised. Policy without enforcement infrastructure is suggestion, not governance.

Best Practice 1: Build a Current AI Bill of Materials

A current inventory of every AI system, model, dataset, and third-party integration is the foundation of every other control. NIST AI RMF subcategory GOVERN 1.6 calls for mechanisms to inventory AI systems, resourced according to organizational risk priorities. ISO/IEC 42001 makes a similar demand through its AI system impact assessment requirements.

The inventory should capture, at minimum:

- System name, owner, and business purpose

- Underlying models and providers (including general-purpose foundation models)

- Training and operational data sources

- Risk classification (NIST RMF tier or EU AI Act category)

- Deployment status, version, and decommissioning plan

A 2025 industry survey found that over half of organizations lack systematic inventories of the AI systems they run in production, which makes risk classification and conformity assessment impossible. Without an inventory, governance teams cannot answer the question every regulator and auditor eventually asks: what AI systems do you run, and who is accountable for each one?
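A minimal sketch of an inventory record, assuming a Python-based internal registry, shows how the fields listed above become something a governance team can query. The schema, the `RiskTier` values, and the `inventory_gaps` helper are hypothetical illustrations, not part of NIST AI RMF, ISO/IEC 42001, or any specific tool.

```python
from dataclasses import dataclass, field
from enum import Enum


class RiskTier(Enum):
    # Hypothetical internal tiers; map these to the NIST AI RMF or
    # EU AI Act categories your program actually uses.
    MINIMAL = "minimal"
    LIMITED = "limited"
    HIGH = "high"
    UNCLASSIFIED = "unclassified"


@dataclass
class AISystemRecord:
    """One inventory row (hypothetical schema based on the fields listed above)."""
    name: str
    owner: str
    business_purpose: str
    models: list[str] = field(default_factory=list)        # provider/model identifiers
    data_sources: list[str] = field(default_factory=list)  # training and operational data
    risk_tier: RiskTier = RiskTier.UNCLASSIFIED
    deployment_status: str = "planned"
    version: str = "0.0.0"
    decommission_plan: str = ""


def inventory_gaps(records: list[AISystemRecord]) -> dict[str, list[str]]:
    """Return, per system, the governance fields that are still missing."""
    gaps: dict[str, list[str]] = {}
    for r in records:
        missing = []
        if not r.owner:
            missing.append("owner")
        if not r.models:
            missing.append("models")
        if r.risk_tier is RiskTier.UNCLASSIFIED:
            missing.append("risk_tier")
        if r.deployment_status == "production" and not r.decommission_plan:
            missing.append("decommission_plan")
        if missing:
            gaps[r.name] = missing
    return gaps


records = [
    AISystemRecord("support-bot", "cx-platform", "Customer support triage",
                   models=["openai/gpt-4o"], risk_tier=RiskTier.LIMITED,
                   deployment_status="production",
                   decommission_plan="Retire with CRM v2"),
    AISystemRecord("resume-screener", "", "Candidate shortlisting",
                   deployment_status="production"),
]
print(inventory_gaps(records))
# {'resume-screener': ['owner', 'models', 'risk_tier', 'decommission_plan']}
```

Running a gap report like this on a schedule turns the auditor's question into a query: any production system that surfaces without an owner or risk classification is a governance finding, not a documentation chore.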

Best Practice 2: Map Governance to Recognized Frameworks

Enterprise teams should anchor their program to established frameworks rather than build governance from first principles. The three most relevant references for 2026:

- NIST AI RMF 1.0 and the Generative AI Profile (NIST AI 600-1), which extend RMF guidance to generative and foundation models

- ISO/IEC 42001, the first global management system standard for AI, providing a certifiable framework analogous to ISO 27001 for information security

- EU AI Act (Regulation 2024/1689), which classifies AI systems by risk and imposes specific obligations on high-risk and general-purpose AI providers and deployers

These frameworks overlap significantly. Most ISO 42001 controls map to NIST RMF subcategories, and both inform the technical documentation expectations of the EU AI Act. Building one program that satisfies all three is more efficient than maintaining parallel structures.

Best Practice 3: Address Shadow AI Before It Becomes the Norm

Shadow AI, the unauthorized use of AI tools and APIs outside of governance oversight, is now the single largest source of enterprise AI risk. According to a 2025 Komprise survey reported by CIO.com, 90% of IT directors at large enterprises are concerned about shadow AI from a privacy and security standpoint. ISACA's 2025 analysis of the shadow AI problem identifies four control categories that enterprise teams should implement together:

- Discovery: network monitoring, DNS analysis, and DLP tools to identify unsanctioned AI use

- Policy: tiered classifications (approved, limited use, prohibited) tied to data sensitivity

- Access and identity controls: identity and access management, such as single sign-on, integrated with sanctioned AI tools

- Approved alternatives: governed AI platforms that match the capability employees were seeking

The last point matters most. Blanket bans drive AI use underground; governed alternatives bring it back into oversight. An internal AI gateway that exposes approved models behind a single endpoint, with logging and budget controls, removes the incentive for engineers to provision personal API keys.
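The tiered classification ISACA describes can be expressed as a small policy table that ties each tool tier to the most sensitive data class it may receive. This is a hypothetical sketch: the tier names follow the list above, but the data classes, tool names, and sensitivity ceilings are assumptions to be replaced with your own policy.

```python
from enum import Enum


class ToolTier(Enum):
    APPROVED = "approved"
    LIMITED = "limited"
    PROHIBITED = "prohibited"


class DataClass(Enum):
    # Hypothetical scheme, ordered from least to most sensitive.
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Hypothetical ceiling: the most sensitive data class each tier may receive.
# PROHIBITED has no entry, so no data class is ever allowed for it.
MAX_SENSITIVITY = {
    ToolTier.APPROVED: DataClass.CONFIDENTIAL,
    ToolTier.LIMITED: DataClass.INTERNAL,
}

# Hypothetical tool register; anything not listed is treated as prohibited.
TOOL_REGISTER = {
    "internal-gateway": ToolTier.APPROVED,
    "vendor-chat-enterprise": ToolTier.LIMITED,
    "personal-chat-account": ToolTier.PROHIBITED,
}


def is_allowed(tool: str, data: DataClass) -> bool:
    """Allow a request only if the tool's tier permits this data sensitivity."""
    tier = TOOL_REGISTER.get(tool, ToolTier.PROHIBITED)
    limit = MAX_SENSITIVITY.get(tier)
    if limit is None:
        return False
    return data.value <= limit.value


print(is_allowed("internal-gateway", DataClass.CONFIDENTIAL))        # True
print(is_allowed("vendor-chat-enterprise", DataClass.CONFIDENTIAL))  # False
print(is_allowed("unknown-tool", DataClass.PUBLIC))                  # False
```

Unknown tools default to prohibited, a deny-by-default posture that keeps newly discovered shadow AI outside the approved path until someone classifies it.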

Best Practice 4: Centralize Access Control Through an AI Gateway

The infrastructure pattern that makes the rest of governance enforceable is the AI gateway: a single layer between applications and LLM providers that mediates every request. Bifrost provides this layer as an open-source, self-hosted gateway compatible with 20+ LLM providers through a unified OpenAI-compatible API.

Inside Bifrost, virtual keys function as the primary governance entity. Each virtual key carries:

- Per-consumer access permissions (which models, which providers)

- Budget caps with automatic resets and multi-tier cascading (customer, team, virtual key)

- Token and request rate limits to prevent runaway usage

- MCP tool filtering for agentic workflows

- Active or inactive status that platform teams can toggle instantly

This single primitive collapses several governance requirements into a controllable layer. Instead of relying on every engineering team to enforce model allowlists, spend caps, and rate limits inside their own application code, platform teams enforce them once at the gateway. Bifrost's governance resource page details how hierarchical cost tracking cascades from customer to team to virtual key, with a single exhausted budget at any tier blocking the entire request.
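To make the cascade concrete, the sketch below models a virtual key check in plain Python. It is a hypothetical illustration of the behavior described above, not Bifrost's implementation or configuration schema: the `VirtualKey` and `Budget` classes, the pre-request cost estimate, and the dollar figures are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class Budget:
    limit_usd: float
    spent_usd: float = 0.0

    def remaining(self) -> float:
        return self.limit_usd - self.spent_usd


@dataclass
class VirtualKey:
    """Hypothetical model of a gateway virtual key, not Bifrost's actual schema."""
    key_id: str
    allowed_models: set[str]
    budget: Budget            # this key's own budget
    team_budget: Budget       # shared by every key on the team
    customer_budget: Budget   # shared by every team under the customer
    active: bool = True


def authorize(vk: VirtualKey, model: str, est_cost_usd: float) -> tuple[bool, str]:
    """Check status, model access, and every budget tier before forwarding."""
    if not vk.active:
        return False, "virtual key inactive"
    if model not in vk.allowed_models:
        return False, f"model {model!r} not permitted"
    # Cascade customer -> team -> virtual key; any exhausted tier blocks the request.
    tiers = [("customer", vk.customer_budget), ("team", vk.team_budget),
             ("virtual key", vk.budget)]
    for name, budget in tiers:
        if budget.remaining() < est_cost_usd:
            return False, f"{name} budget exhausted"
    return True, "ok"


def record_spend(vk: VirtualKey, cost_usd: float) -> None:
    """After the provider responds, charge the actual cost to every tier."""
    for budget in (vk.customer_budget, vk.team_budget, vk.budget):
        budget.spent_usd += cost_usd


team = Budget(limit_usd=100.0, spent_usd=99.5)
customer = Budget(limit_usd=1000.0)
vk = VirtualKey("vk-analytics", {"gpt-4o-mini"}, Budget(50.0), team, customer)

print(authorize(vk, "gpt-4o-mini", 0.25))  # (True, 'ok')
print(authorize(vk, "gpt-4o-mini", 1.00))  # (False, 'team budget exhausted')
print(authorize(vk, "gpt-4o", 0.25))       # (False, "model 'gpt-4o' not permitted")
```

Team and customer `Budget` objects are shared by reference across every key that belongs to them, which is what lets one team's spend block all of that team's keys at once even when an individual key still has headroom.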

Best Practice 5: Enforce Content Safety and Guardrails at the Infrastructure Layer

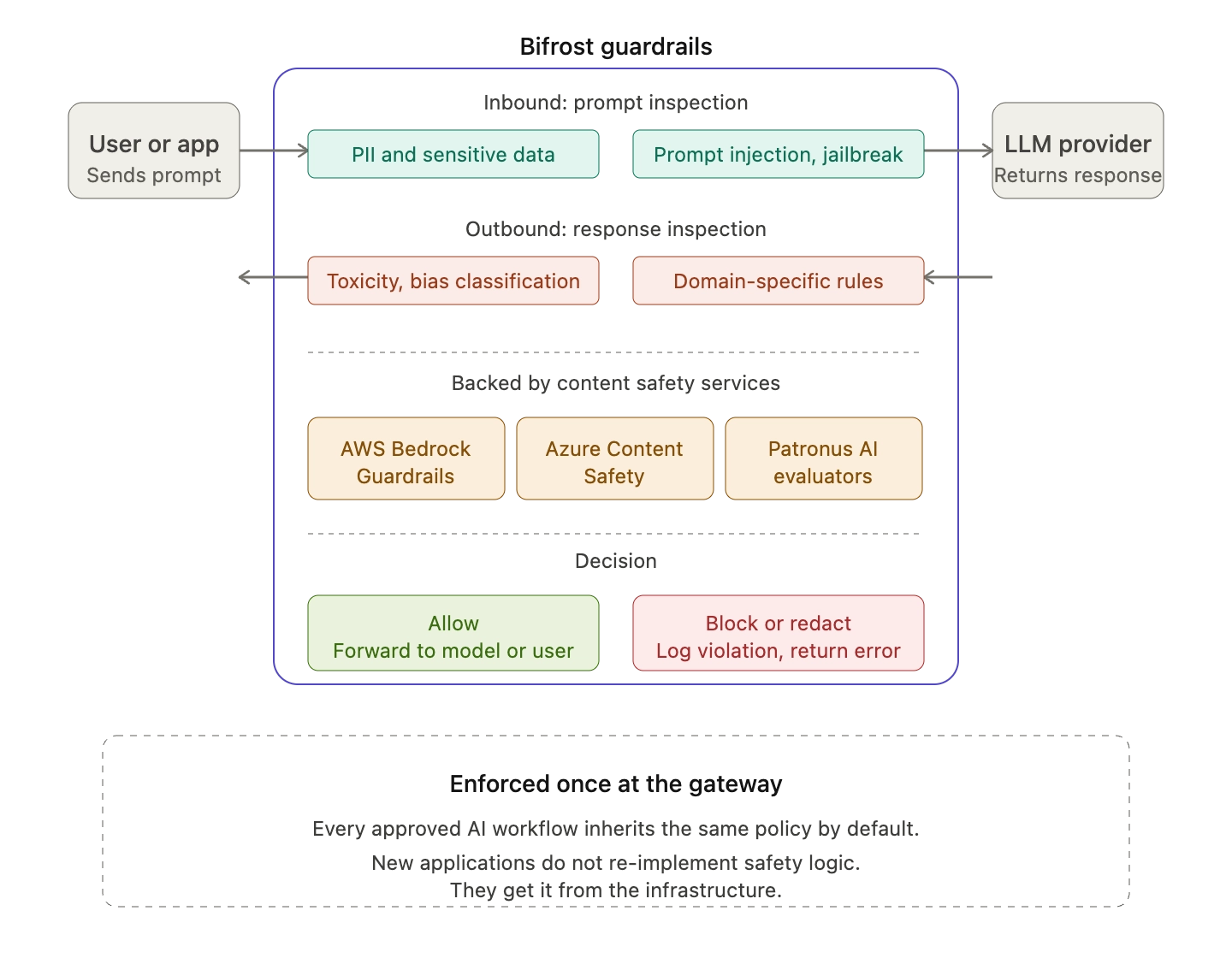

Policy statements about acceptable content, PII handling, and prohibited outputs are necessary but insufficient. Enforcement requires runtime controls that inspect every prompt and response. Bifrost's enterprise guardrails integrate content safety services from AWS Bedrock Guardrails, Azure Content Safety, and Patronus AI to automatically detect and block unsafe outputs before they reach end users or downstream systems.

Effective guardrail policies typically cover:

- PII and sensitive data detection in both prompts and responses

- Prompt injection and jailbreak detection

- Output toxicity, bias, and policy violation classification

- Domain-specific content rules (medical, financial, legal disclaimers)

Because Bifrost enforces these at the gateway, every approved AI workflow inherits the same policy by default. New applications do not need to re-implement safety logic; they get it from the infrastructure.
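The value of gateway-level enforcement is that prompt and response pass through the same checks regardless of which application sent them. The sketch below shows that shape with deliberately naive regex and keyword checks standing in for a managed service such as AWS Bedrock Guardrails or Azure Content Safety. The check functions and the `guarded_call` wrapper are hypothetical, and these patterns are far too simple for production PII or injection detection.

```python
import re
from typing import Callable, Optional

# A check returns a violation label, or None if the text passes.
Check = Callable[[str], Optional[str]]

# Deliberately naive patterns: an email address and a US SSN-shaped number.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
US_SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def pii_check(text: str) -> Optional[str]:
    if EMAIL.search(text) or US_SSN.search(text):
        return "pii_detected"
    return None


def injection_check(text: str) -> Optional[str]:
    # Keyword heuristic standing in for a real prompt-injection classifier.
    if "ignore previous instructions" in text.lower():
        return "prompt_injection_suspected"
    return None


DEFAULT_CHECKS: tuple[Check, ...] = (pii_check, injection_check)


def guarded_call(prompt: str, call_model: Callable[[str], str],
                 checks: tuple[Check, ...] = DEFAULT_CHECKS) -> str:
    """Apply the same checks to the prompt and the response; raise on any violation."""
    for check in checks:
        if (violation := check(prompt)) is not None:
            raise PermissionError(f"prompt blocked: {violation}")
    response = call_model(prompt)
    for check in checks:
        if (violation := check(response)) is not None:
            raise PermissionError(f"response blocked: {violation}")
    return response


def fake_model(prompt: str) -> str:
    # Stand-in for the upstream LLM call.
    return f"You said: {prompt}"


print(guarded_call("Summarize the Q3 roadmap", fake_model))
try:
    guarded_call("My SSN is 123-45-6789", fake_model)
except PermissionError as err:
    print(err)  # prompt blocked: pii_detected
```

Replacing a naive check with a call to a managed classifier changes one function, while every application behind the gateway keeps calling the same wrapper.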

Best Practice 6: Govern Agentic and MCP-Connected AI Systems

The fastest-growing governance gap is in agentic AI: autonomous agents that chain tool calls, access multiple systems, and execute actions without human review for each step. The Cloud Security Alliance and others have begun publishing extensions to NIST RMF specifically for agentic deployments, including the NIST AI RMF Agentic Profile released in early 2026, which proposes autonomy tier classification, runtime behavioral governance, and delegation chain accountability.

Bifrost's MCP gateway centralizes Model Context Protocol connections, applying access control, OAuth authentication, and tool filtering across every agent and tool server. Specific governance controls include:

- Tool filtering per virtual key, so agents only see the tools they are authorized to use

- OAuth 2.0 with PKCE and automatic token refresh for downstream systems

- Federated authentication that turns existing enterprise APIs into governed MCP tools without rewriting them

- Code Mode execution that reduces token consumption by 50% and cuts attack surface by orchestrating tools through code rather than ad-hoc model calls

For deeper detail on MCP gateway governance and cost outcomes, see the post on Bifrost's MCP gateway, access control, and cost governance.
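Per-key tool filtering amounts to a deny-by-default allowlist applied twice: once when the agent's tool list is assembled, and again when a tool call is executed. The catalog, key names, and allowlist format below are hypothetical and do not reflect Bifrost's MCP configuration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    server: str  # MCP server the tool lives on
    name: str


# Hypothetical catalog aggregated from every connected MCP server.
CATALOG = [
    Tool("github", "create_issue"),
    Tool("github", "delete_repo"),
    Tool("jira", "search_tickets"),
    Tool("postgres", "run_query"),
]

# Hypothetical per-virtual-key allowlist: server -> permitted tool names ("*" = all).
ALLOWLISTS: dict[str, dict[str, set[str]]] = {
    "vk-support-agent": {"jira": {"*"}, "github": {"create_issue"}},
    "vk-analytics-agent": {"postgres": {"run_query"}},
}


def visible_tools(virtual_key: str) -> list[Tool]:
    """Return only the tools this key may see; unknown keys see nothing."""
    allow = ALLOWLISTS.get(virtual_key, {})
    visible = []
    for tool in CATALOG:
        permitted = allow.get(tool.server, set())
        if "*" in permitted or tool.name in permitted:
            visible.append(tool)
    return visible


def authorize_call(virtual_key: str, tool: Tool) -> bool:
    """Re-check at execution time so a model cannot call a tool it was never shown."""
    return tool in visible_tools(virtual_key)


print([t.name for t in visible_tools("vk-support-agent")])
# ['create_issue', 'search_tickets']
print(authorize_call("vk-support-agent", Tool("github", "delete_repo")))  # False
```

The second check matters because a model can emit a call to a tool name it was never shown; filtering the tool list alone does not prevent that.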

Best Practice 7: Maintain Immutable Audit Logs and Continuous Monitoring

NIST RMF MEASURE and MANAGE functions, ISO 42001 clauses on monitoring, and EU AI Act Article 12 (record-keeping for high-risk systems) all converge on the same requirement: every AI request, decision, and policy action must be recorded in a tamper-evident log and continuously monitored.
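One common way to make a log tamper-evident is a hash chain, where each entry stores the hash of the entry before it. The sketch below assumes SHA-256 over canonical JSON; the `AuditLog` class and event fields are hypothetical, and this is not a description of how Bifrost stores its audit trail.

```python
import copy
import hashlib
import json
import time


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so identical content always hashes identically.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only, hash-chained log: altering any past entry breaks verify()."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, event: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        # Deep-copy so later changes to the caller's dict cannot alter the record.
        body = {"ts": time.time(), "event": copy.deepcopy(event), "prev_hash": prev_hash}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        prev_hash = self.GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in ("ts", "event", "prev_hash")}
            if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append({"vk": "vk-analytics", "model": "gpt-4o-mini", "action": "allowed"})
log.append({"vk": "vk-analytics", "model": "gpt-4o-mini", "action": "guardrail_block"})
print(log.verify())  # True
log.entries[0]["event"]["action"] = "denied"  # simulate tampering with a past entry
print(log.verify())  # False
```

A hash chain detects tampering but does not prevent it: anyone who can rewrite the whole log can recompute every hash. Teams typically pair it with write-once storage or periodically publish the latest hash to a separate system.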

Bifrost's enterprise audit logs capture immutable trails suitable for SOC 2, GDPR, HIPAA, and ISO 27001 audits, and the gateway's observability layer exports OpenTelemetry traces and Prometheus metrics to existing monitoring stacks. What enterprise teams should monitor continuously:

- Request volume, latency, and error rates per virtual key and per provider

- Budget consumption against policy thresholds, with alerts before breach

- Guardrail violations, blocked outputs, and policy override frequency

- Anomalous usage patterns suggesting credential misuse or shadow AI re-emergence

Continuous monitoring is what turns governance from a static document into a living system. The NIST RMF playbook explicitly frames the four functions as an iterative loop, not a linear checklist.
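Alerting before a budget breach, rather than after it, mostly comes down to comparing successive spend readings against fixed thresholds. The threshold values, the `BudgetStatus` type, and the `notify` callback below are assumptions; in a real deployment the spend readings would come from the gateway's exported metrics.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class BudgetStatus:
    key: str          # virtual key or team identifier
    limit_usd: float
    spent_usd: float


# Hypothetical alert thresholds, as fractions of the budget limit.
THRESHOLDS = (0.5, 0.8, 0.95)


def crossed_thresholds(prev_spent_usd: float, status: BudgetStatus) -> list[float]:
    """Return every threshold crossed between the previous and current spend readings."""
    if status.limit_usd <= 0:
        raise ValueError("budget limit must be positive")
    before = prev_spent_usd / status.limit_usd
    after = status.spent_usd / status.limit_usd
    return [t for t in THRESHOLDS if before < t <= after]


def check_and_alert(prev_spent_usd: float, status: BudgetStatus,
                    notify: Callable[[str], None]) -> None:
    """Send one alert per newly crossed threshold, so each fires only once."""
    for t in crossed_thresholds(prev_spent_usd, status):
        notify(f"{status.key} crossed {t:.0%} of its ${status.limit_usd:.2f} budget")


# Example: spend jumps from $70 to $96 on a $100 budget.
check_and_alert(70.0, BudgetStatus("vk-analytics", 100.0, 96.0), print)
# vk-analytics crossed 80% of its $100.00 budget
# vk-analytics crossed 95% of its $100.00 budget
```

Comparing against the previous reading, rather than only the current one, keeps a key that stays above 80% from re-alerting on every scrape.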

Best Practice 8: Plan for Compliance Deadlines Now

Enterprise governance programs should be calibrated against concrete regulatory deadlines, not generalized risk anxiety. The most significant near-term milestones:

- August 2, 2026: EU AI Act high-risk system obligations begin, with potential deferral to December 2027 under the Digital Omnibus proposal currently in trilogue

- 2026 and ongoing: ISO/IEC 42001 certification becoming a procurement requirement in several enterprise sectors

- Ongoing: NIST AI RMF updates, including the Generative AI Profile and the emerging Agentic Profile

Enterprise teams in regulated industries should also align governance with sector-specific requirements. Bifrost's industry pages for financial services and banking, and for healthcare and life sciences, outline compliance-aligned deployment patterns for in-VPC and air-gapped environments.

Operationalizing AI Governance with Bifrost

The pattern that emerges across NIST RMF, ISO 42001, and the EU AI Act is consistent: governance must be enforceable at the point of request, not just documented in policy. Bifrost provides that enforcement layer with:

- Virtual key governance with hierarchical budgets, rate limits, and model access control

- RBAC, SSO with Okta and Microsoft Entra, and directory synchronization for enterprise identity integration

- Vault support for HashiCorp Vault, AWS Secrets Manager, Google Secret Manager, and Azure Key Vault

- In-VPC and air-gapped deployments for data residency and sovereignty requirements

- Native guardrails, MCP gateway controls, and immutable audit logs

- 11 microseconds of overhead at 5,000 RPS, so governance does not become a performance tax

Independent Bifrost performance benchmarks and the LLM Gateway Buyer's Guide provide capability detail for teams evaluating gateway-based governance against alternative architectures.

Start Building Enterprise AI Governance

AI governance best practices for enterprise teams come down to a single principle: policy is only as effective as the infrastructure that enforces it. Frameworks like NIST AI RMF, ISO/IEC 42001, and the EU AI Act define the outcomes regulators and auditors expect; the AI gateway pattern is how enterprise teams turn those outcomes into runtime controls.

To see how Bifrost can serve as the governance enforcement layer for your AI workloads, book a demo with the Bifrost team.