Best Open Source MCP Gateways in 2026

The best open source MCP gateways of 2026 compared: performance, governance, security, and enterprise readiness for AI teams scaling agentic workflows.

The Model Context Protocol (MCP) has crossed 97 million monthly SDK downloads and achieved adoption across every major AI vendor. What started as a developer convenience has become production-critical infrastructure. When Cisco announced dedicated MCP security tooling at RSA Conference 2026, the signal was clear: the "this is a dev tool" phase is over.

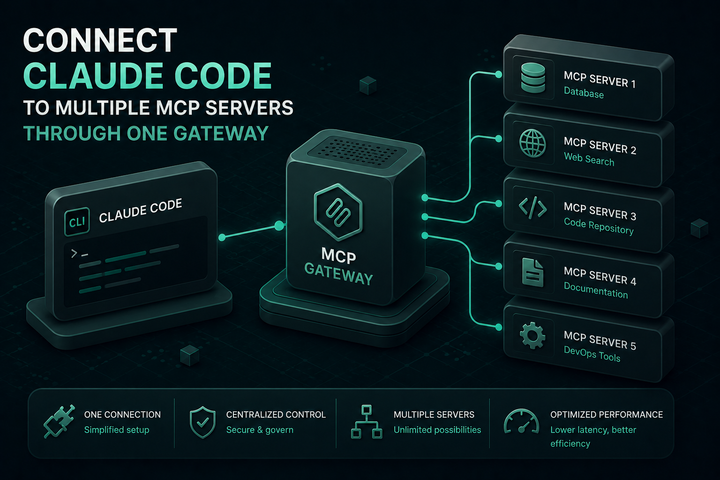

For AI engineering teams, the question is no longer whether to use MCP, but how to govern it at scale. Without a gateway, every agent manages its own server connections and credentials, creating fragmented, unauditable, and insecure tool access. An open source MCP gateway centralizes authentication, enforces access control, logs every tool invocation, and provides a single policy enforcement point across your entire agent fleet.

This post compares the five best open source MCP gateways available in 2026, evaluated on performance, security model, governance depth, and production readiness.

What to Look for in an Open Source MCP Gateway

Before comparing options, it helps to establish the criteria that actually matter for production AI teams:

- Access control depth: Can the gateway enforce permissions at the server level, the tool level, and the parameter level? Agent over-privilege is one of the most common governance failures in agentic deployments.

- Authentication: Support for OAuth 2.0/2.1, token refresh, and integration with enterprise identity providers (Okta, Entra ID).

- Audit trails: Immutable, queryable logs of every tool invocation for compliance with SOC 2, HIPAA, GDPR, and ISO 27001.

- Performance overhead: Latency the gateway adds at the 99th percentile (p99). In high-throughput agent workflows, per-call overhead compounds across every tool invocation in a multi-step task.

- Deployment flexibility: Support for Docker, Kubernetes, and standalone binary deployment, and whether the gateway can run inside your VPC without external dependencies.

- LLM routing integration: Whether the gateway also handles model routing and failover, or whether you need a separate AI gateway alongside it.
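To make the access control criterion concrete, here is a minimal sketch of a check that runs at the server, tool, and parameter levels in turn. The `Policy` and `ToolRule` shapes, and the server and tool names, are hypothetical and chosen only for illustration; none of the gateways below is claimed to use this exact model.

```python
from dataclasses import dataclass, field


@dataclass
class ToolRule:
    """Hypothetical per-tool rule: parameter name -> set of allowed values."""
    allowed_params: dict[str, set[str]] = field(default_factory=dict)


@dataclass
class Policy:
    """Hypothetical policy shape: server name -> tool name -> ToolRule."""
    servers: dict[str, dict[str, ToolRule]] = field(default_factory=dict)

    def is_allowed(self, server: str, tool: str, params: dict[str, str]) -> bool:
        tools = self.servers.get(server)
        if tools is None:  # server-level check
            return False
        rule = tools.get(tool)
        if rule is None:  # tool-level check
            return False
        # Parameter-level check: a constrained parameter, when present,
        # must carry one of its allowed values.
        for name, allowed in rule.allowed_params.items():
            if name in params and params[name] not in allowed:
                return False
        return True


policy = Policy(servers={
    "github": {"create_issue": ToolRule(allowed_params={"repo": {"docs", "sandbox"}})},
})
```

With this policy, an agent may call `create_issue` on the `docs` repository, but a call targeting any other repository, any other tool, or any other server is denied by default.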

1. Bifrost

Best for teams that need a combined LLM gateway and MCP gateway in a single deployment.

Bifrost is a high-performance, open-source AI gateway built in Go by Maxim AI. It is the only tool on this list that handles both LLM routing and MCP tool orchestration from a single binary, eliminating the need to maintain two separate infrastructure components.

Bifrost acts as both an MCP client and an MCP server: it connects to external tool servers over STDIO, HTTP, or SSE, aggregates their tools, and exposes them to external clients like Claude Desktop, Cursor, and Claude Code through a single /mcp endpoint. At the same time, it routes model requests across 20+ LLM providers with automatic failover and load balancing.
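The aggregation pattern described above can be sketched in a few lines. This is an illustration of the general idea, not Bifrost's implementation: the `server.tool` naming scheme and both function names are assumptions. The only protocol detail relied on is that MCP tool calls are JSON-RPC 2.0 requests using the `tools/call` method.

```python
def aggregate_tools(upstreams: dict[str, list[dict]]) -> dict[str, tuple[str, dict]]:
    """Merge per-server tool lists into one catalog keyed by a namespaced name."""
    catalog = {}
    for server, tools in upstreams.items():
        for tool in tools:
            # Prefix with the server name so tools from different servers cannot collide.
            catalog[f"{server}.{tool['name']}"] = (server, tool)
    return catalog


def route_call(catalog: dict, qualified_name: str, arguments: dict) -> tuple[str, dict]:
    """Return the upstream server and the JSON-RPC request to forward to it."""
    server, tool = catalog[qualified_name]
    request = {
        "jsonrpc": "2.0",
        "id": 1,
        "method": "tools/call",
        "params": {"name": tool["name"], "arguments": arguments},
    }
    return server, request


upstreams = {"files": [{"name": "read_file"}], "search": [{"name": "query"}]}
catalog = aggregate_tools(upstreams)
server, request = route_call(catalog, "search.query", {"q": "mcp"})
```

Clients see one merged catalog, while the gateway keeps enough information to forward each call to the server that owns the tool.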

Key MCP capabilities:

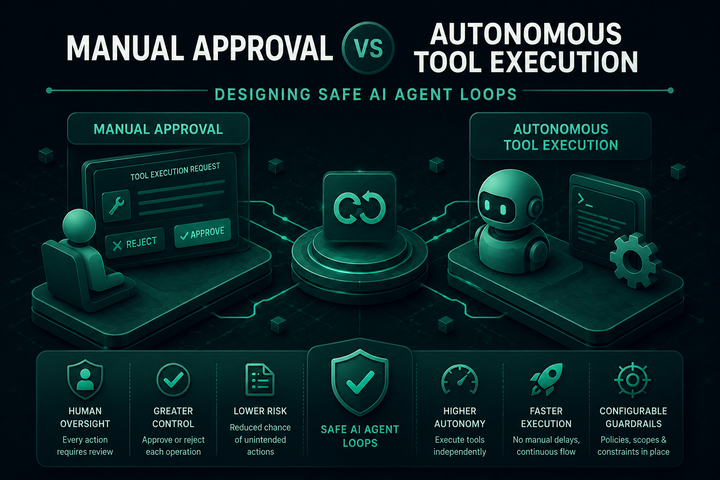

- Agent Mode: Autonomous tool execution with configurable auto-approval policies, so agents can complete multi-step workflows without per-step human intervention.

- Code Mode: Instead of calling tools directly, Bifrost's Code Mode has the AI write Python to orchestrate multiple tools in a single execution, reducing token consumption by approximately 50% and cutting latency by approximately 40% compared to sequential tool calls.

- OAuth 2.0 authentication: Full OAuth flow with PKCE and automatic token refresh for connecting to authenticated MCP servers.

- Tool filtering per virtual key: Control exactly which MCP tools each team, consumer, or workflow can access, enforced at the gateway level.

- Federated auth for enterprise APIs: Transform existing internal APIs into MCP tools without writing new server code, using Bifrost's federated authentication layer.

- Tool hosting: Register and expose custom tools through Bifrost's MCP server without deploying a separate MCP server process.

On the governance side, Bifrost uses virtual keys as the primary access control entity: each key carries its own budget, rate limits, and permitted tool set. Audit logs provide immutable trails for compliance. Enterprise deployments support HashiCorp Vault, AWS Secrets Manager, Google Secret Manager, and Azure Key Vault for credential management.
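As a rough mental model of a virtual key, consider the sketch below. The `VirtualKey` class, its field names, and the fixed-window call counter are all hypothetical; they illustrate how a budget, a rate limit, and a permitted tool set can be enforced together, not how Bifrost stores or evaluates keys.

```python
from dataclasses import dataclass


@dataclass
class VirtualKey:
    """Hypothetical model of a virtual key; field names are illustrative."""
    allowed_tools: set[str]
    budget_usd: float
    max_calls_per_window: int
    spent_usd: float = 0.0
    calls_in_window: int = 0

    def authorize(self, tool: str, cost_usd: float) -> tuple[bool, str]:
        if tool not in self.allowed_tools:
            return False, "tool not permitted"
        if self.calls_in_window >= self.max_calls_per_window:
            return False, "rate limit exceeded"
        if self.spent_usd + cost_usd > self.budget_usd:
            return False, "budget exceeded"
        # Record usage only when the call is allowed.
        self.calls_in_window += 1
        self.spent_usd += cost_usd
        return True, "ok"


key = VirtualKey(allowed_tools={"search"}, budget_usd=1.0, max_calls_per_window=2)
results = [
    key.authorize("search", 0.4),
    key.authorize("delete", 0.1),
    key.authorize("search", 0.4),
    key.authorize("search", 0.4),
]
```

Because every check hangs off the key, revoking or tightening one team's access does not touch any other team's configuration.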

Performance benchmarks show Bifrost adds 11 microseconds of overhead per request at 5,000 requests per second sustained, making it the lowest-latency option in this comparison.

License: Apache 2.0

Language: Go

Deployment: Binary, Docker, Kubernetes

2. Docker MCP Gateway

Docker's open-source MCP Gateway runs each MCP server in its own isolated Docker container with cryptographically signed images, restricted network access, and built-in secrets injection. It manages the full server lifecycle: when an AI application requests a tool, the gateway starts the appropriate container if it is not already running, injects credentials, and proxies the request.
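The start-on-demand lifecycle can be sketched without any container runtime at all. In the example below, `start_server` is a stand-in callable for whatever actually launches the container, and `LazyServerManager` is a hypothetical name; no Docker API calls are shown or implied.

```python
class LazyServerManager:
    """Starts a tool server on first use, then reuses it for later requests."""

    def __init__(self, start_server, secrets: dict[str, dict[str, str]]):
        self._start_server = start_server  # callable(name, env) -> request handler
        self._secrets = secrets            # server name -> credentials to inject
        self._running = {}

    def call(self, server: str, request: dict) -> dict:
        handler = self._running.get(server)
        if handler is None:
            # Start on demand and inject only this server's credentials.
            handler = self._start_server(server, self._secrets.get(server, {}))
            self._running[server] = handler
        return handler(request)  # proxy the request to the running server


started = []


def fake_start(name, env):
    """Test double standing in for a real container launch."""
    started.append(name)
    return lambda request: {"server": name, "token_present": "API_TOKEN" in env, "echo": request}


manager = LazyServerManager(fake_start, {"github": {"API_TOKEN": "example"}})
first = manager.call("github", {"tool": "list_issues"})
second = manager.call("github", {"tool": "get_issue"})
```

The second call reuses the already running server, and each server receives only its own credentials rather than a shared secret store.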

Docker's approach offers strong isolation guarantees. Each server runs in a separate container with restricted privileges, which limits blast radius if a tool server is compromised. The gateway integrates natively with Docker Desktop's MCP Toolkit, giving developers a low-friction path to getting started locally.

The main constraint is that Docker MCP Gateway is designed for developer-local and container-native environments rather than multi-tenant enterprise governance. Cross-team access control and organizational policy enforcement require additional tooling on top. It also does not handle LLM routing, so teams running both LLM traffic and MCP tool traffic need a separate AI gateway.

License: Apache 2.0

Language: Go

Deployment: Docker Desktop, Docker Engine binary

3. IBM ContextForge (MCP Context Forge)

IBM's ContextForge is an open-source registry and proxy that federates MCP servers, A2A agents, and REST/gRPC APIs behind a single endpoint. It provides centralized governance, dynamic tool discovery, and OpenTelemetry-based observability for heterogeneous AI infrastructure.

ContextForge's distinguishing feature is protocol translation: it can convert REST and gRPC services into MCP-compatible tool definitions, allowing teams to expose existing internal APIs to AI agents without rewriting them as MCP servers. It supports 40+ plugins for additional transports and integrations.
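To illustrate what protocol translation involves, the sketch below maps a simplified REST endpoint description to an MCP-style tool definition. The input dictionary shape and the function name are assumptions made for this example, not ContextForge's configuration format; the output uses the `name`, `description`, and `inputSchema` fields that MCP tool definitions carry.

```python
def rest_to_mcp_tool(endpoint: dict) -> dict:
    """Map a simple REST endpoint description to an MCP-style tool definition."""
    properties = {p["name"]: {"type": p["type"]} for p in endpoint["params"]}
    required = [p["name"] for p in endpoint["params"] if p.get("required")]
    return {
        "name": endpoint["operation_id"],
        "description": f"{endpoint['method']} {endpoint['path']}",
        "inputSchema": {  # JSON Schema describing the tool's arguments
            "type": "object",
            "properties": properties,
            "required": required,
        },
    }


# Hypothetical internal endpoint used only for illustration.
endpoint = {
    "operation_id": "get_order",
    "method": "GET",
    "path": "/orders/{id}",
    "params": [
        {"name": "id", "type": "string", "required": True},
        {"name": "verbose", "type": "boolean"},
    ],
}
tool = rest_to_mcp_tool(endpoint)
```

A real translator also has to handle authentication, request bodies, and error mapping, which is where most of the engineering effort goes.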

Key capabilities include an Admin UI for real-time configuration and log monitoring, Redis-backed caching for multi-cluster federation, built-in retries and rate limiting, and OpenTelemetry integration with Phoenix, Jaeger, and Zipkin. It deploys via PyPI or Docker and scales to Kubernetes multi-cluster environments.

ContextForge is built in Python rather than Go, which generally means higher per-request overhead than a compiled gateway such as Bifrost. It is also a newer project than some others on this list, and enterprise governance features (RBAC, SSO integration, audit trails) are still maturing compared to more established options.

License: Apache 2.0

Language: Python

Deployment: PyPI, Docker, Kubernetes

4. Microsoft MCP Gateway

Microsoft's open-source MCP Gateway is a reverse proxy and management layer for MCP servers designed specifically for Kubernetes environments. It provides session-aware stateful routing (ensuring all requests with a given session ID consistently reach the same MCP server instance), lifecycle management via a control plane, and Microsoft Entra ID (formerly Azure AD) integration for authentication.
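One simple way to get the session affinity described above is to hash the session ID and use the result to pick an instance. The sketch below shows that technique under the assumption of a fixed instance list; it is not taken from Microsoft's implementation, which may instead track session-to-instance mappings in a store.

```python
import hashlib


def pick_instance(session_id: str, instances: list[str]) -> str:
    """Deterministically map a session ID to one instance via a stable hash."""
    digest = hashlib.sha256(session_id.encode("utf-8")).digest()
    # Use the first 8 bytes as an integer and reduce it to a list index.
    index = int.from_bytes(digest[:8], "big") % len(instances)
    return instances[index]


# Hypothetical instance names used only for illustration.
instances = ["mcp-0", "mcp-1", "mcp-2"]
chosen = pick_instance("session-abc", instances)
```

Plain modulo hashing remaps most sessions whenever the instance count changes, so gateways that scale servers up and down typically need consistent hashing or an explicit session table instead.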

The gateway introduces the concept of Adapters as logical representations of MCP servers managed under a single /adapters scope. A Tool Gateway Router, itself an MCP server, acts as an intelligent router directing tool execution requests to the appropriate registered tool servers based on tool definitions.

Microsoft's gateway is a strong fit for organizations already running Azure infrastructure and Kubernetes-native workloads, where Entra ID authentication and RBAC are the standard access control model. It is not a general-purpose MCP gateway for teams outside the Azure ecosystem, and it does not provide LLM routing capabilities.

License: MIT

Language: C# (.NET)

Deployment: Kubernetes

5. Obot MCP Gateway

Obot is an open-source MCP gateway and AI platform focused on organizational MCP server management. It provides a searchable catalog of available MCP servers with IT-verified trust levels, role-based access control, audit logging, and Kubernetes deployment. Users generate per-agent URLs to connect MCP servers to their preferred clients, including VS Code, Claude Desktop, and GitHub Copilot.

Obot's differentiator is its catalog approach: rather than requiring teams to configure individual server connections, it provides a managed directory that IT can curate and approve. This reduces the operational burden of onboarding new MCP servers and gives IT visibility into what agents are connecting to.

The open-source edition includes the core gateway, RBAC, and audit logging. Features like Okta and Entra ID integration are part of Obot Enterprise Edition.

License: Apache 2.0

Language: Go

Deployment: Kubernetes, Docker

How the Five Compare

| Capability | Bifrost | Docker | IBM ContextForge | Microsoft | Obot |
|---|---|---|---|---|---|
| MCP client + server | Yes | Client only | Client only | Client only | Client only |
| LLM routing | Yes | No | No | No | No |
| Code Mode (token reduction) | Yes | No | No | No | No |
| OAuth 2.0 | Yes | No | Yes (partial) | Yes (Entra) | Enterprise only |
| Tool-level RBAC | Yes | No | Partial | Yes | Yes |
| Audit logs | Yes | Partial | Yes | Yes | Yes |
| Vault integration | Yes | No | No | No | No |
| Protocol translation (REST/gRPC) | No | No | Yes | No | No |
| Performance overhead | 11 µs per request at 5,000 RPS | Container startup | Higher (Python) | Not published | Not published |

Choosing the Right Open Source MCP Gateway

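For teams building production AI agents at scale that need both LLM routing and MCP tool orchestration, Bifrost offers the most complete architecture: a single open-source AI gateway that handles model traffic, tool orchestration, governance, and observability without additional infrastructure components. Teams with narrower needs may be better served elsewhere: Docker MCP Gateway for container-isolated local development, IBM ContextForge for REST and gRPC protocol translation, Microsoft MCP Gateway for Azure and Kubernetes environments, and Obot for an IT-curated server catalog.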

To see how Bifrost can simplify your AI gateway infrastructure, book a demo with the Bifrost team.