Is AI Distillation Theft or Just How Knowledge Evolves?

Last month Anthropic published something unusual: a detailed accusation. Three Chinese AI labs, DeepSeek, Moonshot AI, and MiniMax, had collectively generated over 16 million exchanges with Claude through ~24,000 fraudulent accounts. They bypassed regional access controls using commercial proxy networks. They targeted specific, high-value capabilities. Anthropic called it a "distillation attack." A coordinated, industrial-scale heist of model intelligence.

The framing is compelling. It's also incomplete.

Because if you actually understand how modern LLMs are trained, how capabilities get into models in the first place, the line between "illicit extraction" and "how the whole field operates" becomes genuinely difficult to draw. This post tries to draw it honestly.

What Distillation Actually Is (And Isn't)

The word "distillation" is doing heavy lifting in this debate, and it's worth unpacking precisely.

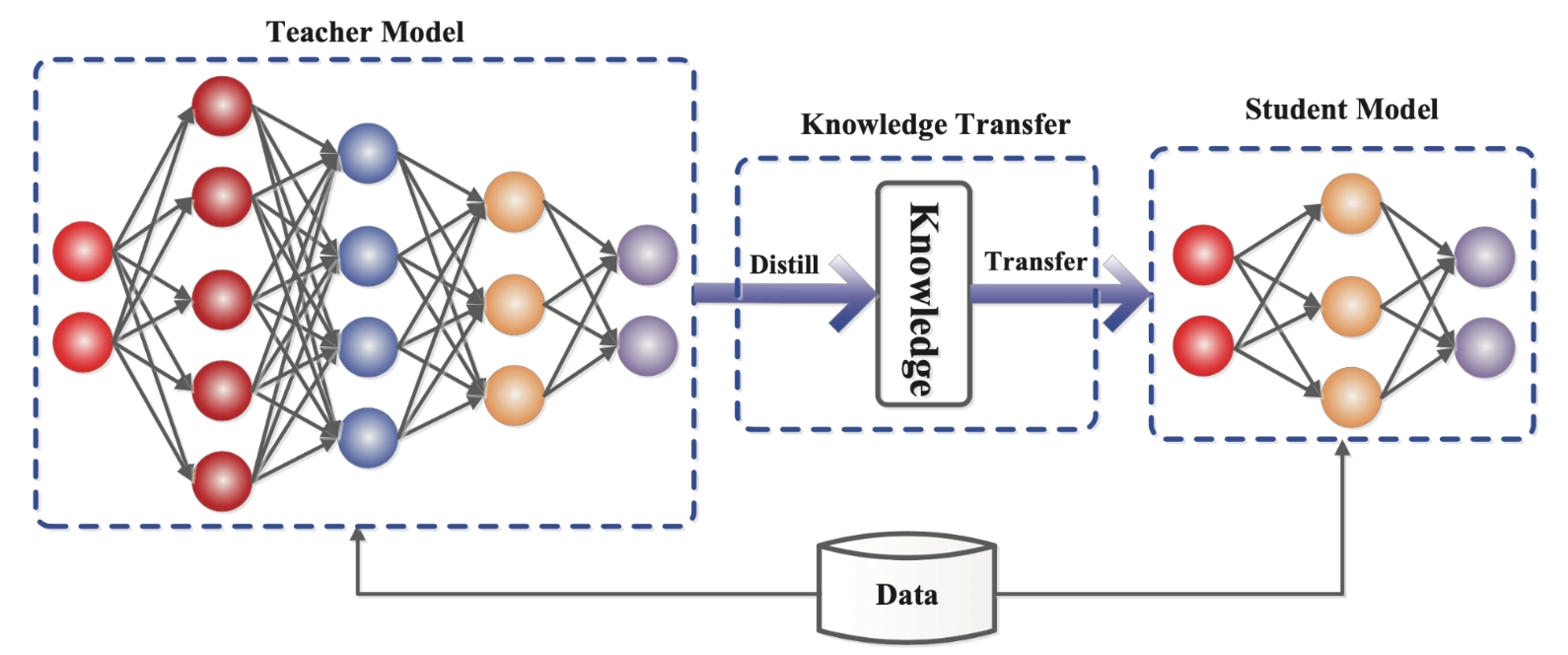

The original technical definition, knowledge distillation (Hinton, Vinyals & Dean, 2015), is specific: a student model is trained to match the full output distribution (the logits) of a teacher model, not just its predicted tokens. This soft-label training transfers more signal than hard labels because the teacher's probability mass over wrong answers encodes semantic relationships. It's richer supervision.

But here's the thing: you can't do classic KD from an API. APIs return sampled tokens, not logit vectors. Without access to the teacher's raw probability distributions, you're not doing knowledge distillation in the technical sense. You're doing something closer to supervised fine-tuning (SFT) on synthetic data: collecting (prompt, completion) pairs from a stronger model and training a weaker one to imitate them via standard cross-entropy loss.

That distinction matters because it affects what actually transfers:

- Classic KD transfers nuanced distributional knowledge: the "dark knowledge" in near-miss probabilities. It's efficient and powerful.

- SFT on synthetic data transfers behavioral patterns: response style, reasoning structure, format, but only what's visible in the sampled output. You get the shadow of the model's reasoning, not the thing itself.

So when we talk about Chinese labs "distilling Claude," we mean: they collected Claude's outputs on carefully chosen prompts and used those as training signal. That's a real capability transfer mechanism. But it's categorically different from having internal API access to Claude's weights or logits.

How the Attacks Actually Worked

Anthropic's report is quite specific about the attack mechanics, and that specificity is revealing.

The access layer: hydra clusters. The labs built infrastructure around the problem. Commercial proxy services operate what Anthropic calls "hydra cluster" architectures: large networks of fraudulent API accounts that distribute traffic to avoid rate limiting and behavioural detection. One network managed 20,000+ accounts simultaneously, mixing distillation traffic with legitimate-looking requests to pollute the signal. When Anthropic banned one account, another took its place. Some may call it opportunistic abuse, some engineered resilience.

The prompt layer: targeted capability extraction. What distinguishes a distillation attack from normal API usage is the statistical pattern, not a single prompt. A prompt like "You are an expert data analyst. Deliver data-driven insights grounded in complete and transparent reasoning" is innocuous in isolation. Sent 50,000 times across 500 coordinated accounts, all targeting the same narrow capability, the pattern becomes unmistakable. Volume concentrated in a few capability areas + highly repetitive structure + content that maps directly onto model training objectives = distillation fingerprint.

The most technically interesting technique: chain-of-thought elicitation. DeepSeek's operation included prompts instructing Claude to "imagine and articulate the internal reasoning behind a completed response and write it out step by step." This is clever. Reasoning traces aren't exposed by default in Claude's API but you can prompt the model to generate post-hoc reasoning as part of its response. The output isn't the same as an internal chain-of-thought, but it's a reasonable approximation of structured reasoning data, which is exactly what you'd want if you're training a reasoning-capable model via SFT. This is roughly analogous to what OpenAI accused DeepSeek of doing with their o1 reasoning traces.

MiniMax's real-time pivot. The most operationally sophisticated behaviour Anthropic documented was MiniMax's response to a new Claude release mid-campaign: within 24 hours, they redirected nearly half their traffic to extract capabilities from the latest model. That's not a team of rogue researchers. That's a coordinated, monitored operation with someone watching the dashboard.

The Strongest Technical Argument Against Distillation Being Decisive

Here's where the "theft" framing starts to strain: the most important part of training a frontier model today cannot be distilled from an API.

Modern top-tier models aren't primarily shaped by SFT anymore. They're shaped by reinforcement learning at scale, specifically, methods like RLHF, RLAIF, and increasingly, reinforcement learning with verifiable rewards (RLVR), where models are trained on problems with objectively checkable answers (math, code execution, formal reasoning). The key characteristic of RL training is that it's on-policy: the model must generate its own rollouts, receive reward signals, and update from those. The majority of the compute cost in RL is inference and those generations have to come from the model being trained, not from a teacher model's API.

This is a hard ceiling on what distillation can achieve. You can use API outputs to build a decent SFT initialization. You can collect rubric-based grading data (as DeepSeek did) to bootstrap a reward model. But to actually run the RL loop that shapes the model's highest-level reasoning and planning capabilities, you need your own cluster, your own rollouts, your own reward signal. That work can't be outsourced to Claude's API.

Anthropic's MiniMax case , 13 million exchanges targeting agentic coding and tool use, is the most plausible scenario for meaningful capability transfer. Agentic behavior is hard to synthesize from scratch and represents a genuine differentiator in Claude's current capability profile. But even there, the question is how much of MiniMax's agentic capability ultimately came from SFT on Claude outputs versus their own post-training pipeline. That's not a question Anthropic's blog post answers, because it can't: the counterfactual is unobservable.

The Uncomfortable Symmetry

Let's be direct about something the "distillation attack" framing obscures: the entire modern AI training stack runs on synthetic data from stronger models, and everyone knows it.

- OpenAI used GPT-4 outputs to build instruction-following datasets for GPT-3.5 and fine-tuned variants.

- Meta's LLaMA derivatives were trained partly on ChatGPT-generated data (before OpenAI cracked down).

- Academic labs (Allen AI, EleutherAI) have used API-generated synthetic data extensively.

- Virtually every "open" model fine-tune you've seen on Hugging Face in the past two years has some Claude or GPT-4 data in it.

The practice of using a stronger model's outputs to train a weaker one is not a fringe behavior. It is, as Nathan Lambert (Allen AI) puts it in his blog, arguably the single most useful method AI researchers use day-to-day to improve models. What separates "legitimate synthetic data" from "distillation attack" in Anthropic's framing comes down to three things: consent, scale, and intent. The Chinese labs failed on all three: fraudulent accounts, tens of millions of exchanges, and explicit capability extraction goals. That's a real distinction.

But the field should be honest that it's a distinction of degree and method, not of kind.

What the Safety Argument Actually Gets Right

The strongest version of Anthropic's case isn't competitive: it's about alignment tax and capability transfer asymmetry.

Frontier AI labs spend enormous resources embedding safety behaviors into their models: RLHF fine-tuning on human preference data, constitutional AI methods, red-teaming, staged deployment with behavioural monitoring. These aren't features bolted onto a capability model. They're baked into the training process in ways that are difficult to cleanly separate from the underlying capabilities.

When you distill from a model like Claude via SFT on API outputs, you don't automatically inherit that safety work. You get the behavioural surface, the response style, the reasoning patterns, the format, but the deeper alignment properties are fragile in transfer. They depend on specific training dynamics (the KL penalty in RLHF, the reward model's calibration, the particular human feedback distribution) that aren't replicated just by imitating outputs. A model trained on Claude's outputs to generate plausible-sounding, well-reasoned responses can exhibit Claude-like behavior on the surface while being much easier to jailbreak on refusals that require deeper alignment to maintain.

This is the real asymmetry: capabilities transfer more robustly than safeguards. And for models that are then deployed in military, intelligence, or mass-scale civilian contexts as the distilled models from these labs likely will be: that gap matters.

This argument doesn't require believing in American AI exceptionalism. It requires believing that alignment is hard, that it doesn't travel for free in API outputs, and that capable models without careful alignment work are genuinely more dangerous. That's a defensible claim.

The Unstable Equilibrium

Here's the honest state of play: the AI industry has built an arrangement that is structurally impossible to maintain.

Frontier models are simultaneously the most commercially valuable software ever created and fully accessible to anyone with a credit card via an API. The same capabilities that underpin billion-dollar enterprise contracts can be probed, extracted, and approximated by anyone with enough patience and proxy infrastructure. No detection system will close that gap entirely. It's cat-and-mouse on an asymmetric playing field, where the attacker only needs to find the signal once.

The stable end states are limited. Either frontier models become effectively closed, ie. API access heavily restricted, reasoning traces hidden by default (Gemini already flipped this switch), first-party only for the most capable systems, or the industry accepts that capability diffusion is inevitable and invests instead in the thing that doesn't transfer easily: the safety and alignment work that makes capable models trustworthy.

Anthropic's detection and response is a reasonable short-term move. Naming the labs publicly is a reasonable escalation. But the deeper problem, that the value in frontier models can be partially extracted through carefully designed API calls, isn't solved by better fraud detection. It's solved by deciding what frontier AI is actually for, and building the access and incentive structures that match that answer.

That's the debate the industry hasn't had yet. Distillation attacks are just forcing it into the open.

Sources:

- Anthropic, "Detecting and Preventing Distillation Attacks" (Feb 2026)

- Nathan Lambert, "How Much Does Distillation Really Matter for Chinese LLMs?" Interconnects AI (Feb 2026)

- Hinton, Vinyals & Dean, "Distilling the Knowledge in a Neural Network" (2015)