What Is an MCP Gateway? A Guide for Production AI Agents

An MCP gateway centralizes tool discovery, access control, and execution for AI agents using Model Context Protocol. Learn how it works and why it matters in 2026.

An MCP gateway is a centralized infrastructure layer that sits between AI agent clients and the Model Context Protocol (MCP) servers they connect to. Instead of every agent managing its own MCP server list, credentials, and tool catalogs, an MCP gateway exposes all connected tools through a single endpoint, enforces governance policies, and provides a complete audit trail of every tool execution. As AI agents move from prototypes into production and the number of MCP servers per agent grows from a handful to several dozen, an MCP gateway becomes the difference between a manageable, governable agent estate and an ungoverned sprawl of credentials, configurations, and token costs. Bifrost, the open-source AI gateway built by Maxim AI, was designed for exactly this control point, combining native MCP support with virtual-key governance, tool filtering, and a Code Mode execution path that has been shown to reduce input tokens by up to 92.8% at scale.

Why MCP Created the Need for a Gateway

The Model Context Protocol was introduced by Anthropic in November 2024 as an open standard for connecting AI assistants to the systems where data lives, including content repositories, business tools, and development environments. Its explicit goal was to replace fragmented one-off integrations with a single protocol. The architecture is straightforward: developers can either expose their data through MCP servers or build AI applications (MCP clients) that connect to these servers. Roughly a year later, the Linux Foundation announced the formation of the Agentic AI Foundation, and Anthropic donated MCP to the foundation as a vendor-neutral standard.

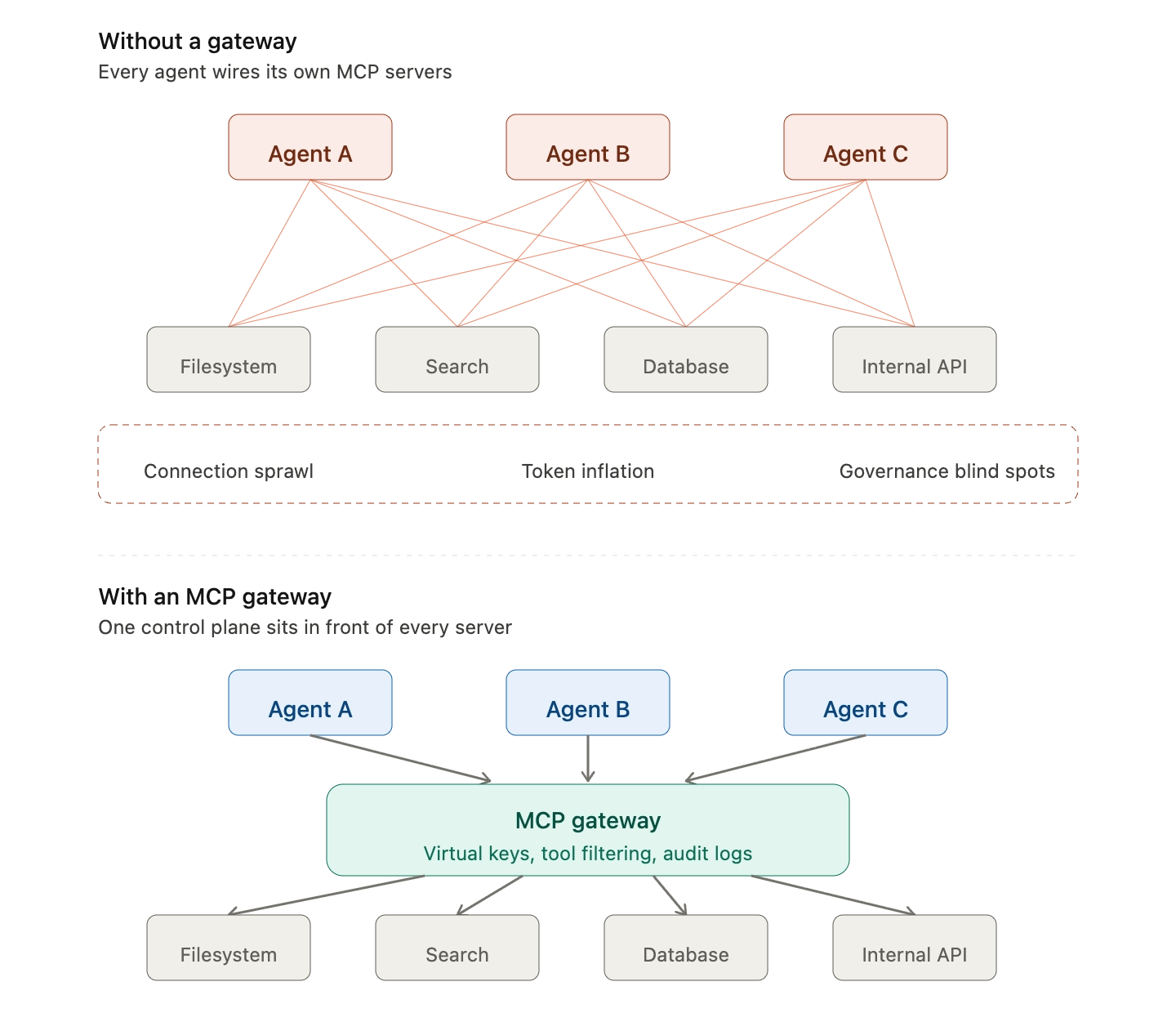

That adoption curve created a new operational problem. Agents that worked fine with two or three MCP servers started showing strain once teams connected ten, twenty, or fifty. The pain points fall into three buckets:

- Connection sprawl: Every agent maintains its own list of MCP server endpoints, transports (STDIO, HTTP, SSE), and credentials.

- Token inflation: Default MCP clients inject every tool definition from every connected server into the model's context on every request.

- Governance blind spots: Without a central control plane, there is no consistent way to filter which tools a given consumer can call, set budgets, or audit what executed.

An MCP gateway is the infrastructure response to those three problems.

What an MCP Gateway Actually Does

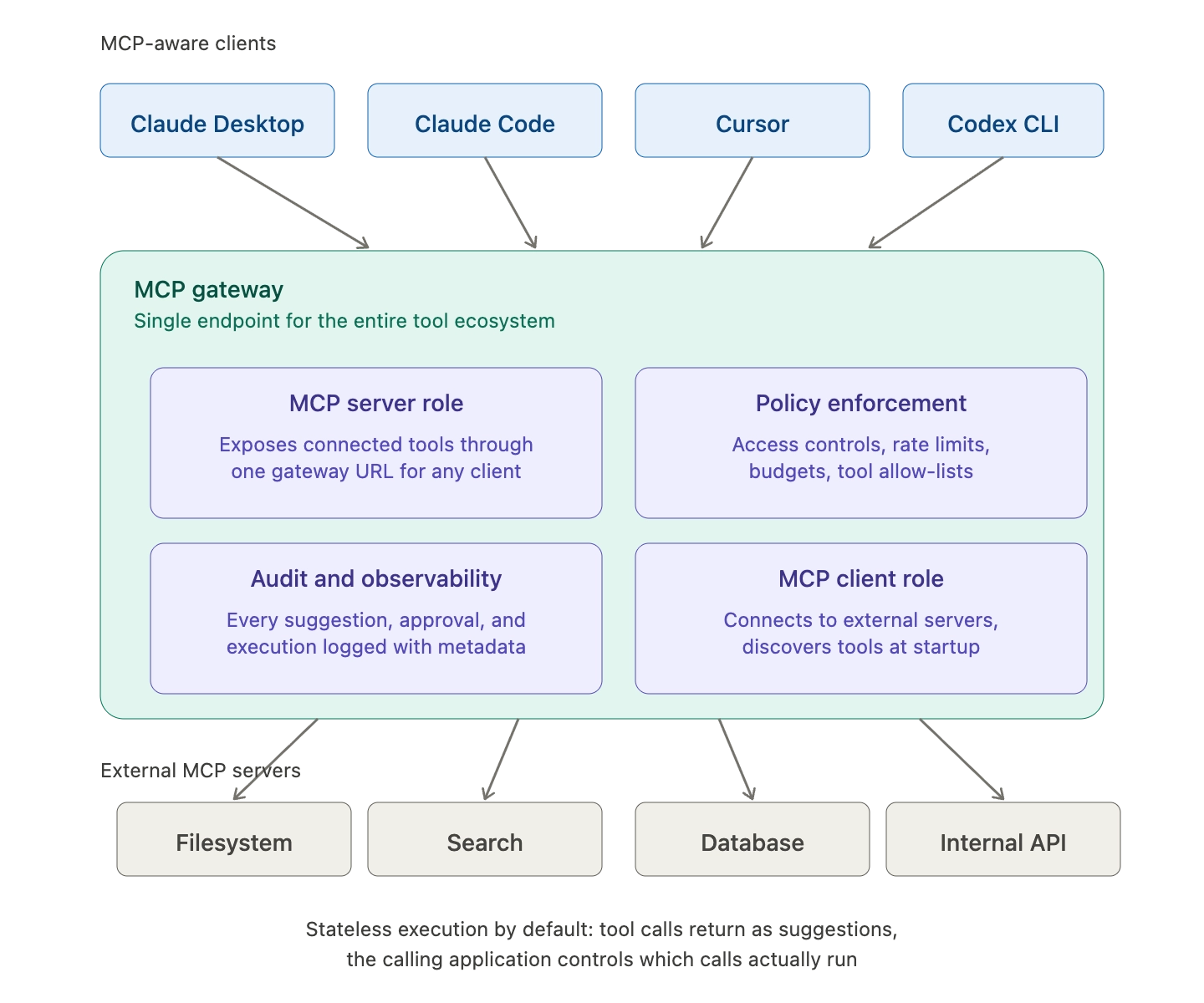

A production-grade MCP gateway plays four distinct roles in the agent stack:

- MCP client: Connects to external MCP servers (filesystem, search, databases, custom APIs) and discovers their capabilities at startup.

- MCP server: Exposes the entire connected tool ecosystem through a single gateway URL that any MCP-aware client (Claude Desktop, Claude Code, Cursor, Codex CLI) can point at.

- Policy enforcement point: Applies access controls, rate limits, budget caps, and tool allow-lists before any tool executes.

- Audit and observability layer: Logs every tool suggestion, approval, and execution with full metadata for compliance and debugging.

Bifrost is built to act as all four simultaneously. Bifrost's MCP gateway auto-discovers tools from any MCP-compatible server, injects them into chat completion requests, and returns tool suggestions back to the calling application, all while keeping execution stateless by default so applications retain full control over which calls actually run.
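To make the client-plus-server role concrete, here is a minimal in-memory sketch of a gateway that collects tools from several servers and exposes them as one namespaced catalog. It is an illustration only, not Bifrost's implementation: `ToyGateway`, `register_server`, and the `server.tool` naming scheme are all hypothetical, and a real gateway would discover tools over MCP (for example with a `tools/list` request) rather than take a hard-coded dictionary.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Tool:
    server: str
    name: str
    description: str

    @property
    def qualified_name(self) -> str:
        # Namespacing avoids collisions when two servers expose the same tool name.
        return "{}.{}".format(self.server, self.name)


@dataclass
class ToyGateway:
    """Hypothetical in-memory stand-in for a gateway's aggregated tool catalog."""

    catalog: dict = field(default_factory=dict)

    def register_server(self, server: str, tools: dict) -> None:
        # Stand-in for discovery; a real gateway would query the MCP server.
        for name, description in tools.items():
            tool = Tool(server, name, description)
            self.catalog[tool.qualified_name] = tool

    def list_tools(self) -> list:
        # One catalog behind one endpoint, regardless of how many servers exist.
        return sorted(self.catalog)


gateway = ToyGateway()
gateway.register_server("filesystem", {"read_file": "Read a file", "search": "Search files"})
gateway.register_server("web", {"search": "Search the web"})
print(gateway.list_tools())
```

Both servers expose a tool called `search`, and the namespaced catalog keeps them distinct, which is one reason a single aggregated endpoint needs some naming convention.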

The Tool Sprawl Problem MCP Gateways Solve

The cost of an ungoverned MCP estate is not theoretical. Independent security analysis has documented more than 10,000 active public MCP servers, alongside seven concrete enterprise security risks tied to that growth: sensitive data exfiltration, unauthorized agent actions, overprivileged access, supply chain exposure, missing audit trails, privilege escalation, and shadow AI sprawl.

Cloudflare, which operates MCP at company-wide scale, has been explicit about why locally hosted MCP servers are a liability. Local MCP server deployments may rely on unvetted software sources and versions, which increases the risk of tool injection attacks. They also prevent IT and security administrators from administering these servers, leaving it up to individual employees and developers to choose which MCP servers they want to run. The conclusion is straightforward: when MCP moves from a single developer's laptop to a production fleet, a gateway is the only place where consistent controls can be enforced.

Bifrost addresses this with tool filtering per virtual key, where the default behavior is deny-by-default. A virtual key with no MCP configuration sees no MCP tools at all, so access is an explicit allow-list rather than an implicit grant. Administrators configure which MCP clients and which specific tools each consumer can reach through the governance layer.
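The deny-by-default rule can be sketched in a few lines. This is a simplified model under stated assumptions, not Bifrost's governance code: the function name `visible_tools`, the key names, and the `server.tool` identifiers are hypothetical.

```python
def visible_tools(virtual_key: str, allow_lists: dict, catalog: set) -> set:
    """Return the tools a virtual key may see under a deny-by-default policy."""
    # A key with no MCP configuration maps to an empty allow-list, so it sees nothing.
    allowed = allow_lists.get(virtual_key, set())
    # Intersect with the catalog so stale allow-list entries grant no access.
    return catalog & allowed


catalog = {"filesystem.read_file", "web.search", "db.query"}
allow_lists = {
    "vk-support-team": {"web.search"},
    "vk-data-team": {"db.query", "web.search", "retired.tool"},
}

print(sorted(visible_tools("vk-support-team", allow_lists, catalog)))
print(sorted(visible_tools("vk-unconfigured", allow_lists, catalog)))
```

The important property is the empty default: forgetting to configure a key fails closed rather than exposing the whole catalog.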

How an MCP Gateway Controls Cost at Scale

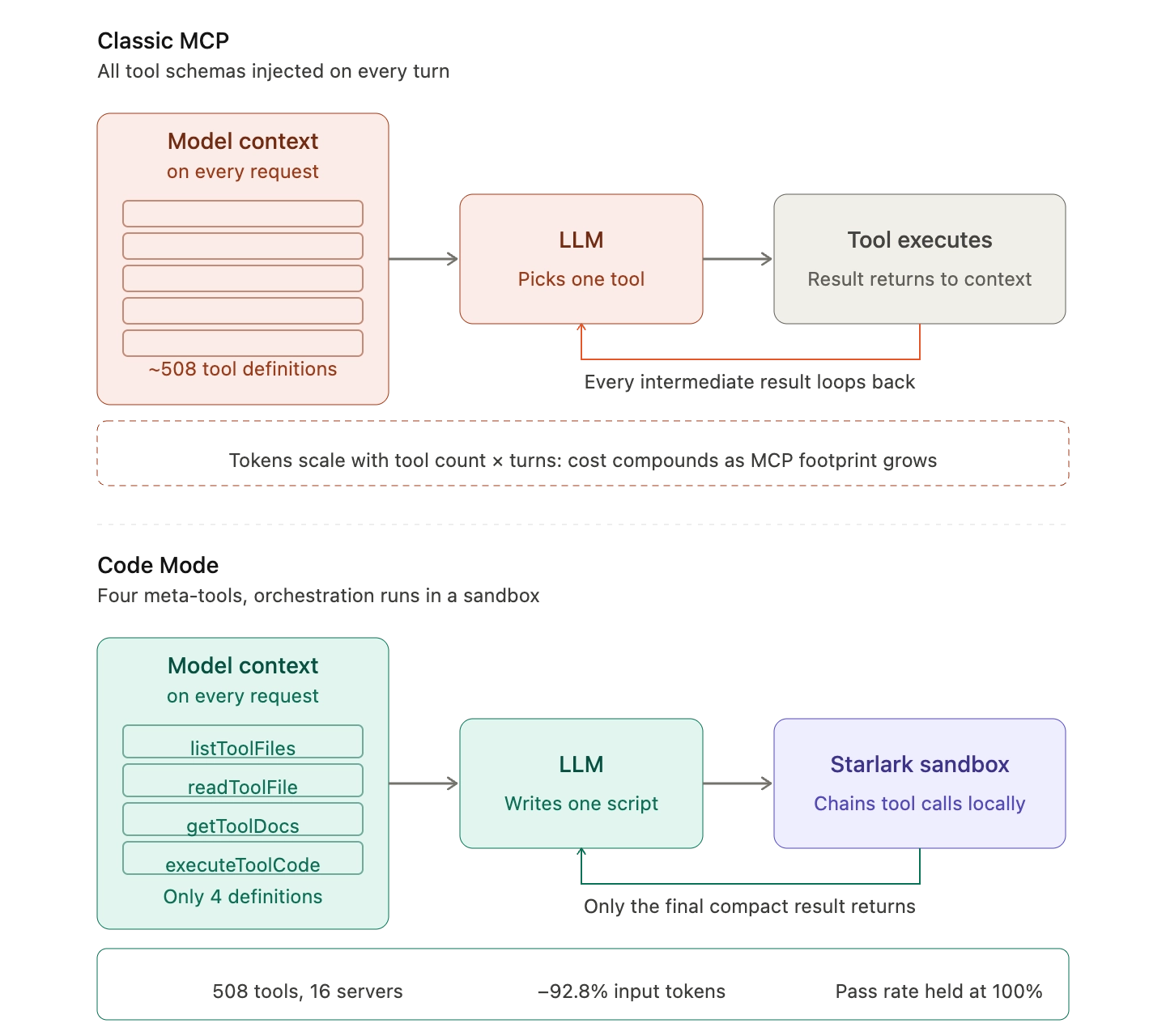

The cost problem with classic MCP is structural, not incidental. Most MCP clients load all tool definitions upfront directly into context, exposing them to the model using a direct tool-calling syntax, which means tool descriptions occupy more context window space, increasing response time and costs. Anthropic's own engineering team has documented the scale of this problem and proposed code execution as the response, noting that MCP provides a foundational protocol for agents to connect to many tools and systems, but once too many servers are connected, tool definitions and results can consume excessive tokens, reducing agent efficiency.
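A rough back-of-the-envelope sketch shows why this overhead is structural: if every definition is sent on every request, context cost grows roughly linearly with the number of connected tools. The tool definitions below are hypothetical, and the four-characters-per-token heuristic is only an assumption for illustration; real tokenizers and real tool schemas will give different numbers.

```python
import json


def estimate_tokens(text: str) -> int:
    # Crude heuristic (about 4 characters per token); real tokenizers differ.
    return len(text) // 4


def make_tool_definition(index: int) -> dict:
    # Hypothetical tool shaped like an MCP tool entry: name, description, inputSchema.
    return {
        "name": "tool_{}".format(index),
        "description": "Hypothetical tool used only to illustrate context growth.",
        "inputSchema": {
            "type": "object",
            "properties": {"query": {"type": "string", "description": "Search text"}},
            "required": ["query"],
        },
    }


def definitions_overhead(n_tools: int) -> int:
    """Estimated tokens spent on tool definitions in a single request."""
    definitions = [make_tool_definition(i) for i in range(n_tools)]
    return estimate_tokens(json.dumps(definitions))


for n in (3, 10, 50):
    print(n, "tools ->", definitions_overhead(n), "estimated tokens per request")
```

Because the overhead is paid on every turn, the per-request figure is multiplied again by the number of turns in an agent loop.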

Bifrost's response is Code Mode, an execution path where the model writes a short script in Starlark (a Python dialect) that orchestrates multiple tool calls inside a sandbox, rather than calling each tool individually through the model's tool-calling loop. Instead of injecting hundreds of tool schemas on every turn, Code Mode exposes only four meta-tools:

- listToolFiles: enumerates the MCP servers and tools that are reachable

- readToolFile: returns Python function signatures for a chosen server or tool

- getToolDocs: fetches detailed documentation for a specific tool on demand

- executeToolCode: runs an orchestration script inside a sandboxed Starlark interpreter

In controlled benchmarks scaling to 508 tools across 16 servers, this approach reduced input tokens by 92.8% with pass rate held at 100%, and the savings curve compounds as the MCP footprint grows. Full methodology and results are published in the Bifrost performance benchmarks.
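The sketch below illustrates the kind of orchestration script a model might submit through executeToolCode. It is written as plain Python rather than Starlark, and the tool functions `search_issues` and `post_message` are hypothetical stubs; in a real deployment the sandbox would bind whatever functions readToolFile describes for the connected servers.

```python
# Hypothetical stand-ins for tools a gateway might bind into the sandbox.
def search_issues(query):
    return [
        {"id": 1, "title": "Login fails", "status": "open"},
        {"id": 2, "title": "Login slow", "status": "closed"},
    ]


def post_message(channel, text):
    return {"channel": channel, "text": text, "ok": True}


# The orchestration script: two tool calls plus filtering, run in one execution
# instead of several round trips through the model's tool-calling loop.
def orchestrate():
    issues = search_issues("login")
    open_titles = [issue["title"] for issue in issues if issue["status"] == "open"]
    summary = "{} open login issue(s): {}".format(len(open_titles), ", ".join(open_titles))
    return post_message("#support", summary)


result = orchestrate()
print(result["text"])
```

The intermediate list of issues never passes back through the model's context; only the final result does, which is where the token savings in this pattern come from.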

MCP Gateway Security: Why a Central Control Plane Matters

MCP security is not a niche concern. In April 2025, security researchers released an analysis that concluded there are multiple outstanding security issues with MCP, including prompt injection, tool permissions that allow for combining tools to exfiltrate data, and lookalike tools that can silently replace trusted ones. The official MCP specification itself acknowledges the trust model that implementations must build on. It notes that tools represent arbitrary code execution and must be treated with appropriate caution, that descriptions of tool behavior such as annotations should be considered untrusted unless obtained from a trusted server, and that hosts must obtain explicit user consent before invoking any tool.

A gateway is where those abstract principles become enforceable controls. Bifrost ships several primitives explicitly designed for this:

- Stateless execution by default: Tool calls returned by the LLM are suggestions, not actions. Execution requires an explicit, separately authorized API call.

- OAuth 2.0 with PKCE: Secure delegated authentication with automatic token refresh for upstream services, scoped per end-user.

- Tool groups and virtual keys: Named collections of tools attached to virtual keys, teams, or customers, resolved at request time with no implicit access.

- Immutable audit logs: Every tool execution recorded as a first-class log entry, with tool name, server, arguments, result, latency, virtual key, and parent LLM request.

- Enterprise guardrails: Content safety integration with AWS Bedrock Guardrails, Azure Content Safety, and Patronus AI for real-time policy enforcement.

For regulated industries, these controls map directly onto compliance requirements. Teams in financial services, healthcare, and the public sector can review Bifrost's vertical-specific patterns in the healthcare AI infrastructure and financial services pages.
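Two of the primitives listed above, stateless execution and audit logging, can be sketched together. This is a toy model under stated assumptions: `ToyExecutor`, `suggest`, and `execute` are hypothetical names, authorization is omitted, and the log fields simply mirror the ones named in the list (tool name, server, arguments, result, latency, virtual key, parent LLM request).

```python
import time
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen blocks field reassignment; it is not tamper-proof storage
class AuditEntry:
    tool: str
    server: str
    arguments: dict
    result: object
    latency_ms: float
    virtual_key: str
    parent_request_id: str


class ToyExecutor:
    """Hypothetical executor separating tool suggestions from tool execution."""

    def __init__(self, tools: dict):
        self.tools = tools  # maps (server, tool) -> callable
        self.audit_log = []

    def suggest(self, server: str, tool: str, arguments: dict) -> dict:
        # A suggestion is only data; nothing runs and nothing is logged here.
        return {"server": server, "tool": tool, "arguments": arguments}

    def execute(self, suggestion: dict, virtual_key: str, parent_request_id: str):
        # Execution is a separate, explicit call made by the application.
        fn = self.tools[(suggestion["server"], suggestion["tool"])]
        start = time.perf_counter()
        result = fn(**suggestion["arguments"])
        latency_ms = (time.perf_counter() - start) * 1000
        self.audit_log.append(AuditEntry(
            tool=suggestion["tool"], server=suggestion["server"],
            arguments=suggestion["arguments"], result=result,
            latency_ms=latency_ms, virtual_key=virtual_key,
            parent_request_id=parent_request_id,
        ))
        return result


executor = ToyExecutor({("calc", "add"): lambda a, b: a + b})
suggestion = executor.suggest("calc", "add", {"a": 2, "b": 3})
entries_before = len(executor.audit_log)  # still zero: suggesting is not executing
value = executor.execute(suggestion, virtual_key="vk-demo", parent_request_id="req-1")
print(value, len(executor.audit_log))
```

Keeping `suggest` free of side effects is what lets the calling application review, filter, or reject a tool call before anything runs.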

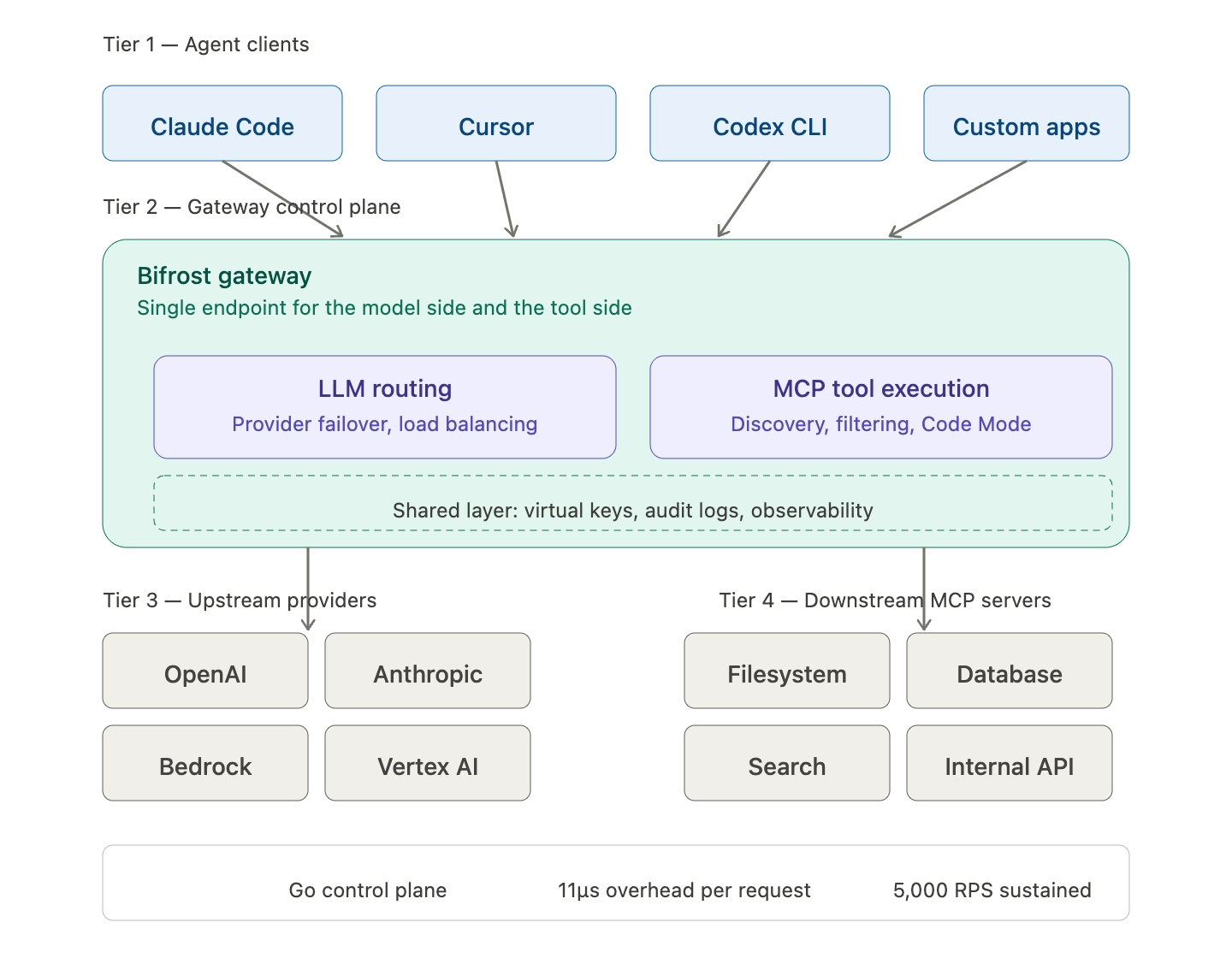

MCP Gateway Architecture: Where It Sits in the Agent Stack

An MCP gateway is a horizontal layer, not a replacement for any specific component. It sits between:

- Upstream: AI agent clients (Claude Code, Cursor, Codex CLI, custom applications) and the LLM providers they use.

- Downstream: MCP servers exposing tools, data sources, and APIs (filesystem, databases, internal services, third-party integrations).

The same gateway typically also handles LLM routing, fallbacks, and observability for the model side of the same workloads. Running both through one layer centralizes tool discovery, routing, governance, and execution, so workflows remain predictable and debuggable. Bifrost is built on this principle: a single Go-based control plane adds only 11 microseconds of overhead per request at 5,000 RPS, while consolidating provider routing, MCP tool execution, and governance into one deployment.

Wiring existing MCP clients into Bifrost requires a single configuration change: point the client's MCP endpoint at the gateway URL. Every connected MCP server becomes reachable through that single URL, scoped by whichever virtual key is in use. The Bifrost Claude Code integration covers this setup for one of the most common agent surfaces.
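As a rough sketch of what that single change can look like, the snippet below generates a client configuration with one remote MCP server entry pointing at a gateway. Everything specific here is an assumption: the `mcpServers` layout follows the JSON shape that several MCP clients use for remote servers, the URL is a placeholder, and passing the virtual key as a bearer token is hypothetical. Check your client's documentation and the gateway's documentation for the exact file location, path, and header.

```python
import json


def gateway_client_config(gateway_url: str, virtual_key: str) -> dict:
    """Build a client config with a single MCP server entry: the gateway."""
    return {
        "mcpServers": {
            "gateway": {
                "type": "http",
                "url": gateway_url,
                # Hypothetical: how the virtual key is presented depends on the gateway.
                "headers": {"Authorization": "Bearer {}".format(virtual_key)},
            }
        }
    }


config = gateway_client_config("https://gateway.example.com/mcp", "vk-example")
print(json.dumps(config, indent=2))
```

The client ends up with one server entry no matter how many MCP servers sit behind the gateway, and swapping the virtual key changes which tools that entry exposes.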

When to Adopt an MCP Gateway

The decision point is usually a function of three signals:

- Server count: Beyond three MCP servers, the token-cost and tool-selection problems become noticeable. Beyond ten, they become unavoidable.

- Team count: As soon as more than one team consumes MCP tools, divergence in configuration, credentials, and policies starts. A gateway is the only durable answer.

- Production readiness: Any agent moving from prototype to a customer-facing or revenue-affecting workflow needs auditable tool execution. Local MCP servers cannot provide that.

MCP itself is now governed under the Linux Foundation, with broad cross-vendor adoption from OpenAI, Google, Microsoft, AWS, and Cloudflare signaling that the protocol is here to stay. The operational question is no longer whether to adopt MCP, but how to run it safely.

Start with the Bifrost MCP Gateway

An MCP gateway turns the Model Context Protocol from a useful integration standard into infrastructure that scales. Bifrost provides a single control plane for MCP tool discovery, governance, audit, and cost optimization, with native Code Mode support that cuts input tokens by up to 92.8% as the tool surface grows, and an open-source core under Apache 2.0. To see how the Bifrost MCP gateway fits into your production agent stack, book a demo with the Bifrost team.