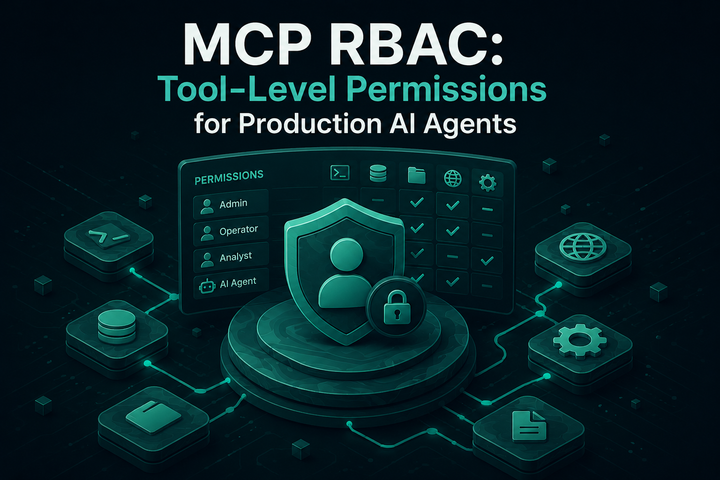

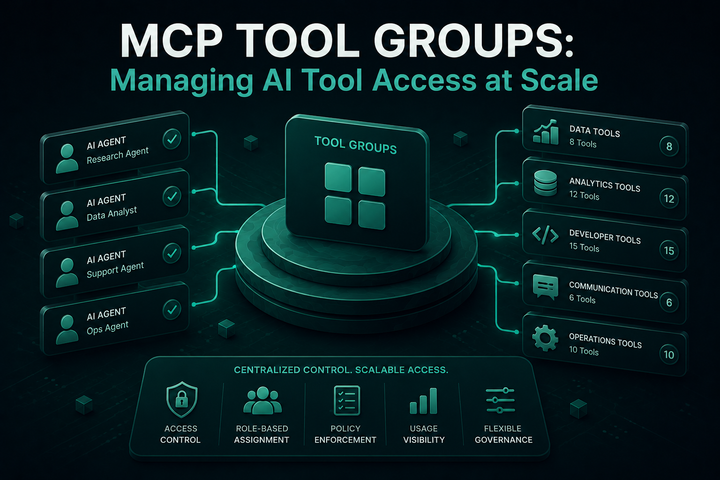

MCP Tool Groups: Managing AI Tool Access at Scale

Bifrost MCP Tool Groups let platform teams govern MCP tool access across teams, customers, users, and providers from a single named policy.

Once an MCP deployment moves past a handful of virtual keys, managing tool access one key at a time stops working. A new team needs the same database